the Creative Commons Attribution 4.0 License.

CropSight-US: an object-based crop type ground truth dataset using street view and Sentinel-2 satellite imagery across the contiguous United States, 2013–2023

Zhijie Zhou

Chunyuan Diao

Accurate and scalable crop type maps are vital for supporting food security, as they provide critical information on the specific crops cultivated in a given area to inform agricultural decision-making and enhance crop productivity. The generation of these maps depends on high-quality crop type ground truth data, which are essential for developing remote sensing–based crop type classification models applicable across varying spatial and temporal contexts. Yet existing crop type ground truth datasets often focus on specific crop types of limited spatial and temporal ranges, constrained by the high cost and labor intensity of traditional field surveys. This limitation hinders their applicability to large-scale and multi-year applications, such as nationwide crop monitoring and long-term yield forecasting. Additionally, most existing crop type ground truth datasets contain only pixel-level labels without explicit field boundaries, impeding the extraction of field-level texture and structure information needed for accurate crop type mapping in heterogeneous agricultural landscapes. Collectively, these limitations hinder the development of scalable crop type mapping workflows and reduce the precision and reliability of resulting crop type maps for agricultural monitoring and decision support. In this study, we introduce CropSight-US, a novel national scale, object-based crop type ground truth dataset for the contiguous United States (CONUS) derived from street view and satellite imagery. This dataset spans the years 2013 to 2023 and includes over 100 000 crop type ground truth objects across 17 major crops and 294 Agricultural Statistics Districts, offering broad spatial and temporal coverage and high representativeness at field level. Each crop type ground truth object is accompanied by an uncertainty score that quantifies the confidence in its crop type identification, enabling users to filter or weight samples according to their specific reliability requirements. 
The crop type ground truthing framework of CropSight-US innovatively integrates crop labels derived from Google Street View imagery with field boundaries delineated from Sentinel-2 imagery to produce object-based crop type ground truth data. This scalable framework offers a valuable alternative to traditional field surveys by replacing in-person observations with virtual audits, significantly improving the efficiency, scalability, and cost-effectiveness of ground truth data collection. This framework achieves 97.2 % overall accuracy in crop type identification and 98.0 % F1 score in cropland field boundary delineation using the reference dataset. By delivering high-resolution, standardized, and reproducible reference data, CropSight-US establishes a new benchmark for crop type ground truthing and supports more informed agricultural research, monitoring, and decision-making. CropSight-US is available at https://doi.org/10.5281/zenodo.15702414 (Zhou et al., 2025).

Food security will face increasing pressure in the coming decades due to climate change, population growth, and limited resources. In response to these growing pressures, a variety of agricultural policies have been developed to promote more efficient crop management practices (e.g., crop rotation and intensification) and to boost crop productivity. Accurate crop type maps play a central role in guiding these policy decisions by providing detailed information on the specific crops cultivated in a given area. In recent years, advances in remote sensing, coupled with machine learning and deep learning techniques, have enabled the efficient generation of crop type maps (Cai et al., 2018; Weiss et al., 2020; You and Sun, 2022; Koukos et al., 2024). As essential training data for remote sensing-based classification models, crop type ground truth plays a critical role in crop type mapping by providing labeled examples of various crop species. These crop labels enable models to accurately classify crop types across large geographic regions by capturing the distinctive remote sensing signatures (e.g., spectral characteristics, phenological patterns, row or canopy structures, and harvesting timelines) associated with each crop type. Therefore, high-quality crop type ground truth datasets are essential for ensuring the accuracy of crop type maps and supporting a wide array of downstream agricultural applications (e.g., crop phenology monitoring, irrigation planning, and crop yield forecasting).

Existing crop type ground truth datasets generally fall into two categories. The first category encompasses the crop type labels collected directly through field surveys. As the most traditional approach, field surveys provide highly accurate crop type information through in-person observation. However, these field-collected ground truth datasets are often sparse, geographically uneven, and infrequently updated due to the high costs and labor demands of field data collection (Liu et al., 2012; Jolivot et al., 2021). In addition, field collection efforts typically capture only the dominant crop types within defined surveyed areas and time frames, often overlooking less prevalent crops or cultivation cycles that may be common in neighboring regions or fall outside the surveyed cultivation windows. These constraints limit the utility of such datasets in supporting the development of remote sensing–based crop classification models that generalize across different regions and growing seasons (Wang et al., 2022; Wu et al., 2022; McNairn and Jiao, 2024). The second category of crop type ground truth data is the crop type labels derived from existing crop type products, such as the Cropland Data Layer (CDL) for the United States (USDA, 2025a; Boryan et al., 2011) and EUCROPMAP for European countries (d'Andrimont et al., 2021; Ghassemi et al., 2022, 2024). With their wide spatial and temporal coverage, these crop type products supply proxy labels that stand in for costly field surveys and are increasingly used to train and validate crop classification models (Whitcraft et al., 2019; Tran et al., 2022; Becker-Reshef et al., 2023). This strategy enables the rapid generation of spatially extensive and temporally consistent ground truth data to support agricultural monitoring across diverse regions and growing seasons, which is advantageous in regions or periods with limited field observations. 
However, the accuracy and representativeness of these product-based “ground truth” data can vary considerably across crop types, regions, and years. Their quality depends on the reliability of the original field-based crop observations, the resolution and consistency of remote sensing inputs, and the robustness of the classification models used to produce the maps (Chivasa et al., 2017; Nowakowski et al., 2021). In addition, their utility is limited by delayed release schedules. Since existing crop type maps are typically generated using year-round satellite observations to capture complete phenological cycles (Wu et al., 2022; Yang et al., 2023, 2024), the “ground truth” for a given year often becomes available in following years (e.g., CDL is often released in the subsequent year). This delay limits their applicability for near-real-time crop mapping and subsequent time-sensitive applications such as in-season yield forecasting, pest and disease monitoring, or early warning systems that require more concurrent crop type information (Stehman and Foody, 2019; Wu et al., 2022; Liu et al., 2024).

In the practice of crop type mapping, object-based crop type ground truth data (e.g., at field- or parcel-level) is typically created by associating crop labels with delineated field boundaries to support more accurate and robust crop type classification. Compared to pixel-based labels, object-based labels provide a more detailed and spatially coherent representation of agricultural fields. By enabling the characterization of both within-field features (e.g., spectral, textural, and shape attributes) and between-field relationships (e.g., connectivity, contiguity, proximity, and directional patterns), object-based crop type labels enhance the spatial context available to classification models and improve crop type mapping performance (Duro et al., 2012; Ok et al., 2012; Kussul et al., 2016; Li et al., 2016; Zhang et al., 2018; Waldner and Diakogiannis, 2020). In addition, for large-scale crop mapping with remote sensing techniques, aggregating pixel-level information within object-based boundaries could help mitigate cloud contamination by increasing the availability of valid observations at the field level. However, the generation of object-based crop type ground truth datasets remains challenging due to the lack of explicitly defined field boundaries in most available datasets. Field survey-based datasets, typically collected through Global Positioning System-referenced observations, are generally coordinate-based and provide point-level crop type labels without associated field geometries (McNairn and Jiao, 2024). Crop product-based datasets, which are derived by sampling pixel-level crop type labels from existing crop type maps, similarly lack cropland field boundary delineations. 
Transforming either category into object-based ground truth requires extensive post-processing, such as reconciling point- or pixel-level labels with external field boundary datasets. This process is often time- and resource-intensive, which limits the production of high-quality datasets across diverse regions. Alternatively, object-based products can be generated by aggregating classified pixels into polygons based on spatial and spectral similarity, as implemented in datasets such as the Crop Sequence Boundary (CSB) (Boryan et al., 2011; Abernethy et al., 2023; Hunt et al., 2024). However, these automated methods may introduce boundary uncertainties and misalignment with actual management units, particularly in heterogeneous landscapes. Consequently, both approaches still demand substantial computational resources and rigorous quality control to ensure reliability, complicating the scalable mapping of agricultural systems.

In this paper, we introduce CropSight-US, a novel, national-scale, object-based crop type ground truth dataset that provides representative and extensive crop type information across multiple years for the contiguous United States (CONUS). Covering 17 major crop types from 2013 to 2023 across 294 Agricultural Statistics Districts (ASDs) in CONUS, CropSight-US is generated using our proposed crop type ground truthing framework, which integrates crop type labels identified from Google Street View (GSV) imagery with field boundary information derived from high-resolution Sentinel-2 imagery. This novel framework enables the efficient collection of high-quality, object-based crop type ground truth information at large spatial and temporal scales by replacing the in-person field observations required in traditional surveys with virtual audits. With its built-in uncertainty quantification design, the collected CropSight-US dataset incorporates uncertainty information to support quality assessment and informed model development. Its object-based structure, which includes both crop type labels and delineated field boundaries, facilitates the training of crop type classification models that leverage field-level characteristics. As a major global agricultural production region, CONUS serves as a critical reference area for agricultural research and remote sensing applications. Its diverse cropping systems, shaped by a wide range of climates, soils, and management practices, make it an ideal setting for systematically building a representative ground truth dataset covering a diversity of crop types. This rich variability allows CropSight-US to support the development of generalizable crop classification models capable of robust mapping across diverse agricultural landscapes and effective transfer to data-scarce regions (Hao et al., 2020; Nowakowski et al., 2021; Koukos et al., 2024; Mai et al., 2025). 
Together, these features position CropSight-US as a foundational resource for advancing scalable, transferable, and reliable crop type mapping in practical and operational agricultural applications (e.g., precision agriculture practice, compliance monitoring systems).

The remainder of this paper is organized as follows: Sect. 2 introduces the study area and describes the CropSight-US data sources. Section 3 presents the four components of the crop type ground truthing framework used to develop the dataset, including (1) operational cropland field-view GSV metadata collection, (2) reference dataset (CropGSV-Ref) building, (3) crop type identification, (4) cropland field boundary delineation, along with the framework evaluation methods and production of the CropSight-US dataset. Section 4 evaluates the performance of the crop type ground truthing framework. Section 5 provides a comprehensive overview of the dataset, including its spatial and temporal coverage, crop type composition, and a Google Earth Engine (GEE)-enabled interface for user access. Section 6 concludes with a discussion of CropSight-US' merits, current limitations, future directions for improvement, and potential avenues for extending the dataset and its ground truthing framework.

2.1 Study Area

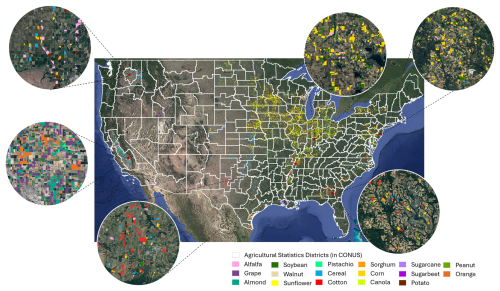

Our study focuses on CONUS, a globally significant agricultural production region. With its wide range of agroecological zones, climate regimes, and cropping systems, CONUS serves as a valuable reference area for agricultural research and remote sensing applications. CONUS' diverse environmental and agricultural management conditions have shaped the regional variation in dominant crops, such as corn and soybean prevalent in the Midwest, cotton and sorghum in the South, and a mix of specialty crops in parts of the West and Southeast (Fig. 1). This broad spectrum of cultivation practices and environmental conditions makes CONUS an ideal testbed for building operational ground truth datasets at large spatial and temporal scales. Our study period spans from 2013 to 2023, based on the availability and quality of street view imagery used for crop type ground truth collection.

2.2 Data

The primary data source used for crop type ground truth collection in this study is Google Street View (GSV) imagery, accessed through the Google Maps platform (Anguelov et al., 2010). Since its launch in 2008, GSV has expanded its coverage extensively across CONUS, offering a rich archive of high-resolution, georeferenced 360° panoramic images. These images are captured by camera-equipped vehicles that travel along public roads. Each GSV panoramic image is accompanied by rich metadata attributes, including the Pano ID, heading, latitude, longitude, month, and year. The Pano ID serves as a unique identifier for each panorama, while the heading indicates the direction the camera-equipped vehicle is facing when capturing the image. The latitude, longitude, month, and year associated with each panorama provide geographic and temporal context, which we used to filter, sample, and acquire the representative GSV imagery for subsequent crop type ground truth collection. GSV metadata and imagery are both retrieved using Google Street View Static API (https://developers.google.com/maps/documentation/streetview/overview, last access: 28 February 2026).

To support crop type ground truth collection, we integrate multiple datasets to collect GSV imagery from agricultural regions, with unobstructed views of croplands, under different irrigation regimes, and during optimal growing periods. First, we use the 10 m WorldCover land cover product (Zanaga et al., 2022) to identify GSV images falling within areas labelled as “cropland” or “tree cover,” which are more likely to capture agricultural landscapes. Derived from Sentinel-1 and Sentinel-2 imagery, WorldCover offers consistent 10 m global land cover information suitable for large-scale filtering. Second, road network data from OpenStreetMap (Haklay and Weber, 2008) are used to exclude GSV imagery collected along U.S. primary roads and at major intersections, reducing the likelihood of visual obstructions such as overpasses or interchanges in the corresponding GSV imagery. Third, to ensure that the collected crop type ground truth reflects both irrigated and rainfed systems, we incorporate the Landsat-based Irrigation Dataset (LANID) (Xie et al., 2021) to guide the collection of GSV imagery, enabling the collection of representative samples under different irrigation management strategies. Lastly, we use USDA's Crop Progress Reports (CPRs) to guide the selection of GSV imagery collected during the region-specific crop growing seasons. CPRs (USDA, 2025b) offer weekly updates on crop development stages at the state level across the U.S., enabling us to define region-specific planting-to-harvest windows. Focusing on GSV imagery captured within these tailored windows helps exclude images that are more likely to depict barren fields or off-season conditions, thereby streamlining the labeling process and improving the overall accuracy of crop type ground truth collection.

We use cloud-free, visible bands (RGB) of Sentinel-2 imagery to extract field boundaries that correspond to the crop labels identified from GSV imagery. Each GSV image captures crop conditions during a specific month of a given year. To correctly delineate the corresponding field boundary for that same period, we need satellite imagery that is both temporally aligned and cloud free. Sentinel-2's 10 m spatial resolution, combined with its frequent revisit times, enables the capture of field conditions that align with the timing of GSV collection, while also providing visually distinct boundary details to support accurate field delineation. When cloud cover, missing data, or seasonal mismatches limit the availability of suitable Sentinel-2 imagery, or for GSV imagery captured prior to full Sentinel-2 availability (before 2017), we supplement with high-resolution aerial photographs from the National Agriculture Imagery Program (NAIP) via Google Earth Engine (USDA, 2003). NAIP images, typically at 1 m resolution, are captured during the agricultural growing season across the U.S. and are selected based on acquisition dates closest to the corresponding GSV images.

The CSB dataset is used as a benchmark product for evaluating our crop type ground truthing framework due to its nationwide coverage and object-level crop type labels. CSB is generated from pixel-level Cropland Data Layer (CDL) data through a vectorization process that leverages multiple years of CDLs to delineate homogeneous field objects (Boryan et al., 2011; Abernethy et al., 2023; Hunt et al., 2024). With its object-based crop type information, CSB has been widely used in applications that track crop rotations and require stable field boundaries over time (Castle et al., 2025; Renwick et al., 2025; Sohl et al., 2025).

This section outlines the methodological workflow for generating CropSight-US, a nationwide object-based crop type ground truth dataset spanning 2013 to 2023. The workflow consists of three main components: the development of the crop type ground truthing framework, its evaluation, and the subsequent production of the CropSight-US dataset (Fig. 2). The crop type ground truthing framework contains four major components: (1) Operational Cropland Field-View GSV Metadata Collection (Sect. 3.1.1): This component collects the metadata information of all historical geotagged cropland field-view GSV images that are suitable for crop type ground truth retrieval at large scales in an operational fashion. (2) Object-based Reference Dataset Building (Sect. 3.1.2): This step constructs a high-quality object-based reference dataset (CropGSV-Ref) to support the development of crop type identification and cropland field boundary delineation models. To ensure the reference dataset captures a wide range of farming conditions, samples are selected from collected cropland field-view GSV metadata across different regions, with stratification based on the extent of cropland/tree cover and irrigated land. The finalized CropGSV-Ref dataset contains cropland field-view GSV imagery with manually labeled crop types and corresponding Sentinel-2 imagery with annotated field boundaries. (3) Crop Type Identification (Sect. 3.1.3): An uncertainty-aware crop type classification model, CONUS-UncertainFusionNet, is developed to identify crop types from field-view GSV imagery using the labeled field-view GSV imagery from CropGSV-Ref. The model combines Vision Transformer (ViT-B16) and ResNet-50 to capture both global patterns and local plant details in field-view GSV imagery, and incorporates a Bayesian design to generate crop type predictions along with uncertainty estimates. (4) Cropland Field Boundary Delineation (Sect. 3.1.4): The Segment Anything Model (SAM) is adopted, fine-tuned using field boundary annotations from CropGSV-Ref, and applied to extract cropland field boundaries from the Sentinel-2 imagery corresponding to each cropland field-view GSV image. Leveraging SAM's prompt-based design, geotagged coordinates from each cropland field-view GSV image serve as spatial prompts to guide boundary delineation specific to each field-view location. The performance of the crop type ground truthing framework (Sect. 3.2) is evaluated from three perspectives: the accuracy of crop type classification from cropland field-view GSV imagery, the effectiveness of cropland field boundary delineation using Sentinel-2 imagery, and the reliability of the collected object-based crop type information in comparison with that from the CSB benchmark product. Finally, the CropSight-US dataset (Sect. 3.3) is generated by applying the developed crop type ground truthing framework across CONUS. The resulting product provides object-based crop type information including crop type labels associated with classification uncertainty and delineated cropland field boundaries.

Figure 2. Methodological workflow for generating the object-based crop type ground truth dataset CropSight-US across CONUS. Map data © 2024 Google. GSV images are from Imagery © 2024 Google Maps, and Sentinel-2 imagery thumbnails are from Imagery © 2024 European Union/ESA/Copernicus Sentinel-2, processed via Google Earth Engine.

3.1 Crop Type Ground Truthing Framework

3.1.1 Operational Cropland Field-View GSV Metadata Collection

The operational collection method is designed to systematically extract cropland field-view metadata from the extensive GSV database to enable large-scale crop type labeling. First, raw GSV metadata across CONUS are collected by dividing the region into 10 km grid cells and querying available GSV metadata within each grid cell. This grid-based batch processing approach streamlines the acquisition of large volumes of raw GSV metadata and facilitates efficient data management. Each raw GSV metadata record includes the geographic coordinates, the month and year of imagery capture, and the heading of the vehicle that collected the GSV imagery. Second, to ensure that the collected GSV metadata accurately correspond to cropland field-view GSV imagery suitable for crop type identification, a series of spatial and temporal filters is applied. For spatial filtering, raw GSV metadata located along U.S. primary roads or near road junctions are removed based on road network information from OpenStreetMap, as such locations often include roadway medians, signage, or traffic infrastructure that obstruct clear views of adjacent fields. In addition, since each raw GSV metadata record is collected on the road and linked to a panoramic image capturing views on both sides of the road, the image coordinates are offset by 50 m in both roadside directions to approximate the actual cropland field-view locations (Liu et al., 2024). These offset coordinates are then filtered using the WorldCover land cover map to ensure that the corresponding GSV imagery depicts agricultural landscapes (i.e., cropland field-view GSV imagery). Specifically, a 100 m-radius buffer is applied around each location, and locations lacking cropland or tree cover pixels within the buffer are excluded from the dataset. 
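The 50 m roadside offset described above can be illustrated with a short sketch. It assumes that "both roadside directions" means the two bearings perpendicular to the vehicle heading, and it uses a spherical Earth with an assumed mean radius; the function names are hypothetical and are not taken from the CropSight-US code.

```python
import math

EARTH_RADIUS_M = 6_371_000.0  # assumed mean Earth radius (spherical approximation)


def destination_point(lat_deg, lon_deg, bearing_deg, distance_m):
    """Return the (lat, lon) reached by moving distance_m along bearing_deg."""
    lat1 = math.radians(lat_deg)
    lon1 = math.radians(lon_deg)
    bearing = math.radians(bearing_deg)
    delta = distance_m / EARTH_RADIUS_M  # angular distance in radians

    lat2 = math.asin(
        math.sin(lat1) * math.cos(delta)
        + math.cos(lat1) * math.sin(delta) * math.cos(bearing)
    )
    lon2 = lon1 + math.atan2(
        math.sin(bearing) * math.sin(delta) * math.cos(lat1),
        math.cos(delta) - math.sin(lat1) * math.sin(lat2),
    )
    return math.degrees(lat2), math.degrees(lon2)


def roadside_offsets(lat_deg, lon_deg, heading_deg, offset_m=50.0):
    """Return candidate field-view locations on the right and left of the road.

    Assumption: the roadside directions are heading + 90 and heading - 90 degrees.
    """
    right = destination_point(lat_deg, lon_deg, (heading_deg + 90.0) % 360.0, offset_m)
    left = destination_point(lat_deg, lon_deg, (heading_deg - 90.0) % 360.0, offset_m)
    return {"right": right, "left": left}
```

Each returned location would then be checked against the land cover map (for example, with the 100 m buffer test) before being kept as a cropland field-view record.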
For temporal filtering, crop-specific crop progress calendars from the USDA are referenced to define regional crop growth windows at the state level, and only raw GSV metadata captured within these crop-specific growing seasons are retained. This ensures that the corresponding GSV imagery is temporally aligned with periods when vegetation is present and the crop type is visually distinguishable by its unique features and cultivation patterns. The final cropland field-view GSV metadata contain in-field location coordinates, acquisition month and year, and the heading direction of each cropland field-view GSV image ready to be retrieved. Section 5.1 summarizes the overall spatial and temporal distribution of the collected cropland field-view metadata. Access to GSV imagery through the Google Maps Platform and the Street View Static API endpoints incurs modest and transparent costs. Static Street View image requests are billed at USD 7 per 1000 requests for usage up to 100 000 requests and USD 5.60 per 1000 requests for usage between 100 001 and 500 000 requests, following a monthly free quota of 10 000 requests per user. In contrast, Street View Metadata requests are free of charge and not subject to usage limits.
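As a rough budgeting aid, the pricing quoted above can be turned into a small calculator. This is a sketch under one stated assumption: the free quota of 10 000 requests counts toward the first tier, so requests 10 001 to 100 000 are billed at USD 7 per 1000 and requests 100 001 to 500 000 at USD 5.60 per 1000. Pricing above 500 000 requests is not given in the text, so the function refuses to extrapolate. Actual billing rules may differ and should be checked against the provider's current documentation.

```python
FREE_MONTHLY_REQUESTS = 10_000
# (upper bound of cumulative monthly requests, price in US cents per 1000 requests)
TIERS = [(100_000, 700), (500_000, 560)]


def monthly_image_cost_usd(n_requests):
    """Estimate the monthly cost in USD of Static Street View image requests."""
    if n_requests < 0:
        raise ValueError("n_requests must be non-negative")
    if n_requests > TIERS[-1][0]:
        raise ValueError("pricing above 500 000 requests is not stated in the text")

    cents = 0.0
    lower = FREE_MONTHLY_REQUESTS  # assumption: free requests are consumed first
    for upper, cents_per_1000 in TIERS:
        billable = max(0, min(n_requests, upper) - lower)
        cents += billable * cents_per_1000 / 1000
        lower = upper
    return cents / 100
```

For example, under this assumption a month with 150 000 image requests would bill 90 000 requests at the first rate and 50 000 at the second.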

3.1.2 Object-based Reference Dataset Building

To support the development of the crop type ground truthing framework, we manually construct a high-quality reference dataset CropGSV-Ref with object-based crop type information. This reference dataset consists of geotagged cropland field-view GSV imagery paired with crop type labels (i.e., the GSV component), along with corresponding Sentinel-2 imagery linked to annotated cropland field boundary polygons (i.e., the field boundary component).

First, we select a subset of cropland field-view GSV metadata using a stratified, spatially adaptive sampling strategy to ensure broad geographic coverage and capture agricultural diversity. The core idea of this strategy is to determine the sample size for each ASD by accounting for two key factors: the extent of cultivated land and the distribution of irrigation practices. The sampling density is increased in regions with intensive agricultural activities to ensure sufficient representation, while the sampling strategy also captures variability in land management practices across the CONUS. We begin by setting a total sample size of 150 000 images to balance broad spatial coverage with computational feasibility. This total is proportionally distributed across ASDs based on their share of cultivated land, estimated using the fraction of cropland pixels in the WorldCover land cover product. The result is a target number of samples for each ASD, ensuring that regions with more extensive agricultural activities are more heavily represented. To further reflect differences in management practices, we stratify each ASD's sample target into irrigated and rainfed components. The relative allocation between these components is derived from the LANID dataset, using the estimated proportion of irrigated and rainfed cropland within each ASD. Following stratification, we implement a fishnet-based adaptive grid sampling method to ensure spatial diversity and reduce sample clustering within both irrigated and rainfed categories. Clustering is particularly likely in areas with dense road networks or uneven GSV coverage, where cropland field-view GSV metadata tend to be concentrated. To enable this stratified sampling, we first assign irrigation status to each cropland field-view GSV metadata by analyzing LANID values within a 50 m semi-circular buffer oriented along the GSV heading. 
This allows us to associate each cropland field-view GSV metadata with the dominant irrigation condition of the field it represents and classify it into either the irrigated or rainfed stratum. Sampling is then conducted independently for irrigated and rainfed strata. A uniform 10 km grid is first overlaid on each ASD, and the grid cell size is iteratively adjusted until the number of cells containing at least one valid cropland field-view GSV metadata aligns with the stratified sample target for the irrigated or rainfed stratum. From each qualifying grid cell, one cropland field-view GSV metadata is randomly selected. By explicitly linking sample allocation to cropland extent, irrigation distribution, and spatial coverage, this strategy yields a robust, well-distributed, and management-aware set of cropland field-view GSV metadata. These sampled cropland field-view GSV metadata are then used to retrieve cropland field-view GSV imagery via the Google Street View API to build the CropGSV-Ref dataset.
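The allocation and adaptive grid steps described above can be sketched as follows. This is an illustrative outline rather than the authors' implementation: the rounding rule, the square-root update of the cell size, the tolerance, and all function names are assumptions, and the points are assumed to be in a projected coordinate system with metre units.

```python
import math
import random


def allocate_targets(cropland_share, irrigated_fraction, total=150_000):
    """Split a total sample budget across ASDs, then into irrigated/rainfed strata."""
    share_sum = sum(cropland_share.values())
    targets = {}
    for asd, share in cropland_share.items():
        n_asd = round(total * share / share_sum)
        n_irrigated = round(n_asd * irrigated_fraction[asd])
        targets[asd] = {"irrigated": n_irrigated, "rainfed": n_asd - n_irrigated}
    return targets


def _bin_points(points, cell_size_m):
    """Group (x, y) points by the grid cell that contains them."""
    cells = {}
    for x, y in points:
        key = (math.floor(x / cell_size_m), math.floor(y / cell_size_m))
        cells.setdefault(key, []).append((x, y))
    return cells


def adaptive_grid_sample(points, target, cell_size_m=10_000.0,
                         tolerance=0.05, max_iter=50, seed=0):
    """Pick one random point per occupied cell, resizing cells to approach target."""
    if target <= 0 or not points:
        return cell_size_m, []
    rng = random.Random(seed)
    for _ in range(max_iter):
        cells = _bin_points(points, cell_size_m)
        if abs(len(cells) - target) <= tolerance * target:
            break
        # Heuristic: the occupied-cell count scales roughly with 1 / cell_size**2.
        cell_size_m *= math.sqrt(len(cells) / target)
    else:
        # No convergence (e.g. target exceeds the number of points): use last size.
        cells = _bin_points(points, cell_size_m)
    samples = [rng.choice(cells[key]) for key in sorted(cells)]
    return cell_size_m, samples
```

In use, `adaptive_grid_sample` would be called once per ASD and per stratum, with `target` taken from the corresponding entry returned by `allocate_targets`.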

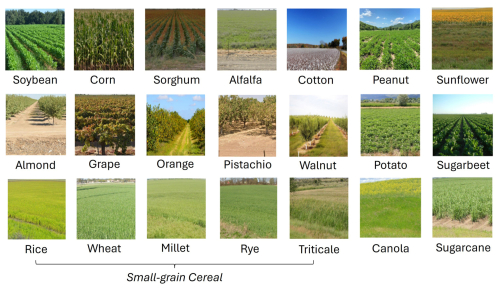

After retrieving the sampled cropland field-view GSV imagery, we construct the GSV component of the CropGSV-Ref dataset through a systematic manual labeling process. Crop type annotations are conducted with guidance from a plant biologist and supported by external resources such as iNaturalist (Van Horn et al., 2018). The resulting dataset includes 17 dominant crop type classes (Fig. 3), including soybean, corn, sorghum, alfalfa, cotton, peanut, sunflower, almond, grape, orange, pistachio, walnut, potato, sugarbeet, (small-grain) cereal, canola, sugarcane, along with an “others” category depicting non-agricultural scenes, rare crops or those lacking identifiable crop features. Given the visual similarity of small-grain cereals in field-view imagery, we group rice, wheat, and other cereals (e.g., millet, rye, triticale) into a single category to reduce labeling ambiguity (Huang et al., 2022; Peña-Barragán et al., 2011). For each crop type, we label up to 2500 high-quality GSV images. Images are excluded from the dataset if they show poor lighting, motion blur, or occlusion features. This labeling protocol ensures that the crop type annotations used in model training and evaluation are both visually interpretable and agronomically meaningful across diverse U.S. cropping systems.

Figure 3. Examples of the GSV component of the CropGSV-Ref reference dataset showcasing the cropland field-view GSV images of 17 crop types (GSV images are from Imagery © 2024 Google Maps).

To build the field boundary component of CropGSV-Ref, we collect corresponding satellite imagery for each GSV image labeled with a crop type. For each labeled GSV image, we identify the highest-quality, least cloudy Sentinel-2 L2A scene within a ±1 month window around the GSV capture month (based on GSV month-year metadata) to ensure temporal alignment and consistent vegetation conditions. We then extract the corresponding 512 × 512 pixel image tile centered on the GSV's in-field location coordinate. When Sentinel-2 imagery is unavailable or compromised by cloud cover or seasonal mismatch, we supplement with NAIP aerial imagery, selecting acquisitions closest in date to maintain phenological consistency. To reduce manual effort in field boundary delineation, we apply the base SAM model using the coordinates of the cropland field-view imagery as point prompts to generate initial boundary predictions. These preliminary outputs are manually refined to ensure spatial accuracy and consistency. During manual boundary delineation, we focus on productive boundaries, which are the divisions between different crop types that may exist within a single field. This approach ensures that delineation reflects crop-specific management practices rather than relying solely on visible physical separations. In cases of unclear boundaries due to mixed cropping or low contrast, multi-temporal imagery from the growing season is used to verify field extents.
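The scene selection and tile extraction logic can be outlined in a few lines. The sketch below interprets the ±1 month window as running from the first day of the previous month to the last day of the following month, which is an assumption, and it represents scenes as plain dictionaries with hypothetical `date` and `cloud_pct` keys instead of calling any satellite data API. The tile extent assumes 10 m Sentinel-2 pixels and projected coordinates in metres.

```python
from datetime import date


def search_window(year, month):
    """Return [start, end) dates spanning the month before through the month after."""
    base = year * 12 + (month - 1)  # months counted from year 0
    start_index, end_index = base - 1, base + 2
    start = date(start_index // 12, start_index % 12 + 1, 1)
    end = date(end_index // 12, end_index % 12 + 1, 1)  # exclusive upper bound
    return start, end


def pick_least_cloudy(scenes, year, month):
    """Return the least cloudy scene inside the window, or None if there is none."""
    start, end = search_window(year, month)
    candidates = [s for s in scenes if start <= s["date"] < end]
    if not candidates:
        return None  # caller would fall back to another source such as NAIP
    return min(candidates, key=lambda s: s["cloud_pct"])


def tile_bounds(center_x, center_y, tile_px=512, pixel_size_m=10.0):
    """Return (xmin, ymin, xmax, ymax) of a square tile centred on a point."""
    half = tile_px * pixel_size_m / 2.0
    return (center_x - half, center_y - half, center_x + half, center_y + half)
```

With these defaults a 512 × 512 tile covers 5.12 km on each side around the in-field location.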

Each annotated sample in the CropGSV-Ref dataset includes a geotagged cropland field-view GSV image, an assigned crop type label, the corresponding acquisition date (year and month), a linked Sentinel-2 satellite image, and a digitized cropland field boundary polygon. With extensive spatial coverage and accurate semantic labeling, the reference dataset supports robust development and assessment of the object-based crop type ground truthing framework throughout CONUS. CropGSV-Ref is partitioned into three splits: training (60 %), validation (20 %), and testing (20 %). The training and validation splits are used to develop the two key components of the framework: CONUS-UncertainFusionNet for crop type classification (Sect. 3.1.3) and the fine-tuned SAM model for cropland field boundary delineation (Sect. 3.1.4). The testing split is used to evaluate the overall performance of the framework in collecting object-based crop type ground truth information (Sect. 3.2).

3.1.3 Crop Type Identification

To retrieve the crop type labels from cropland field-view imagery, we develop CONUS-UncertainFusionNet, an uncertainty-aware crop type classification model tailored for the CONUS region. This model extends the original UncertainFusionNet crop type identification model by incorporating a broader range of crop types from CropGSV-Ref and extending its applicability from regional to nation-wide scale (Liu et al., 2024). UncertainFusionNet was selected for its ability to fuse multi-scale visual features, combining fine-grained textures and global spatial patterns while explicitly modeling prediction uncertainty. Unlike standard deterministic classifiers, it provides not only accurate predictions but also confidence estimates, which are crucial in heterogeneous agricultural landscapes where mixed cropping, visual ambiguity, or suboptimal imagery may challenge conventional models. Its Bayesian formulation enables uncertainty quantification at the image level, improving interpretability, guiding downstream decision-making, and supporting the construction of more reliable datasets across diverse agricultural environments.

CONUS-UncertainFusionNet comprises two primary components: (1) a feature fusion module, and (2) a Bayesian classification module (Fig. 4). The feature fusion module is designed to extract rich visual representations from field-view GSV imagery by integrating two complementary backbones, namely ResNet-50 and ViT-B16. ResNet-50 (He et al., 2016), a convolutional neural network with residual connections, is employed to extract hierarchical visual features, e.g., texture, edge patterns, and spatial structures at the field level, which are important cues for distinguishing crop types (Wang et al., 2019). In parallel, ViT-B16 (Dosovitskiy et al., 2021) captures global spatial relationships by encoding the image as a sequence of linearly embedded non-overlapping patches. The outputs from these two backbone networks are concatenated to form a unified feature representation that integrates both fine-grained visual cues and broader spatial context. This enables a more comprehensive characterization of the input imagery, which is critical for accurate crop type classification in heterogeneous agricultural landscapes. The fused representation is then passed to the Bayesian classification module, which consists of two fully connected layers, each followed by dropout. Rather than producing a single deterministic output, the module estimates a predictive distribution over crop type classes by aggregating output probabilities, $p(y^* \mid x^*, \theta)$, across the posterior distribution of model parameters (Eq. 1). Specifically, each forward pass through the network yields class-wise probabilities via a softmax activation (Bridle, 1990), which transforms the model's raw outputs into a normalized probability distribution over crop type classes, and multiple stochastic passes (enabled by dropout) are used to approximate the posterior predictive distribution.

$$p(y^* \mid x^*, X, Y) = \int p(y^* \mid x^*, \theta)\, p(\theta \mid X, Y)\, \mathrm{d}\theta \tag{1}$$

where $x^*$ denotes an input image, and $y^*$ denotes the corresponding output of the neural network model constructed with the training data $X$ and $Y$ (labels). $y^*$ is a vector comprising elements $y^*_c$, with $y^*_c$ denoting the probability of class $c$, obtained through the softmax function. $\theta$ represents the set of weight parameters of the trained neural network model.

Figure 4. Architecture of CONUS-UncertainFusionNet, featuring dual-branch feature fusion (ResNet-50 and ViT-B16) and a Bayesian classifier for crop type prediction with uncertainty estimation (entropy and variance). The GSV image shown for architecture illustration is from Imagery © 2024 Google Maps.

To estimate the predictive distribution, Monte Carlo (MC) dropout (Gal and Ghahramani, 2016) is employed to approximate Bayesian inference by performing multiple stochastic forward passes. Each pass samples a different set of active neurons due to dropout, effectively simulating a different model instance. This process yields a set of class probability vectors (via the softmax function), which are then aggregated to approximate the predictive distribution over crop type classes (Eq. 2).

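$$p(y^* \mid x^*, X, Y) \approx \frac{1}{T} \sum_{t=1}^{T} p(y^* \mid x^*, \theta_t) \tag{2}$$

where $T$ is the number of stochastic forward pass predictions, and $\theta_t$ denotes the model weights sampled in the $t$th pass.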
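The averaging over stochastic forward passes can be illustrated with a toy example. The sketch below is not the CONUS-UncertainFusionNet classifier: it assumes a single hypothetical linear layer (`weights`) applied to an already-fused feature vector, with dropout on the input features, simply to show how $T$ softmax outputs are averaged into a predictive distribution.

```python
import math
import random


def softmax(logits):
    """Numerically stable softmax over a list of raw scores."""
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def stochastic_pass(features, weights, drop_p, rng):
    """One forward pass with dropout kept active (inverted dropout scaling)."""
    kept = [f / (1.0 - drop_p) if rng.random() >= drop_p else 0.0 for f in features]
    logits = [sum(w * f for w, f in zip(row, kept)) for row in weights]
    return softmax(logits)


def mc_dropout_predict(features, weights, T=50, drop_p=0.5, seed=0):
    """Average T stochastic softmax outputs to approximate the predictive distribution."""
    rng = random.Random(seed)
    passes = [stochastic_pass(features, weights, drop_p, rng) for _ in range(T)]
    n_classes = len(weights)
    mean_probs = [sum(p[c] for p in passes) / T for c in range(n_classes)]
    return mean_probs, passes


# Toy inputs: 4 fused features, 3 classes (all values are illustrative).
features = [0.8, 0.1, 0.5, 0.3]
weights = [
    [2.0, -1.0, 0.5, 0.0],
    [-0.5, 1.5, 0.0, 0.3],
    [0.1, 0.2, -1.0, 1.0],
]
mean_probs, passes = mc_dropout_predict(features, weights, T=50)
```

The individual `passes` are kept alongside the mean because the uncertainty measures discussed next depend on how much the passes disagree.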

Based on this predictive distribution, we quantify uncertainty in crop type identification using two statistical measures: entropy and variance (Abdar et al., 2021; Gour and Jain, 2022; Arco et al., 2023; Liu et al., 2024). Entropy $H$ quantifies the degree of disorder or ambiguity in the class probability vector, with higher entropy indicating more unpredictability in the model's outputs. Variance $\sigma^2$ captures the variability of predicted probabilities across the $T$ predictions, providing a measure of the model's output stability. To filter out predictions with high uncertainty, CONUS-UncertainFusionNet applies thresholds to both entropy and variance (Abdar et al., 2021; Gour and Jain, 2022; Arco et al., 2023). These thresholds are determined by analyzing the intersection points of the density distributions of each uncertainty metric for correctly and incorrectly identified predictions across all classes during the CONUS-UncertainFusionNet training process (Fig. 5). This approach seeks a tradeoff between classification accuracy and the number of field-view images retained for ground truth collection. The filtering rule is expressed with an indicator function (Eq. 3).

where an output of 1 signifies that a prediction has relatively high confidence and should be retained. An output of 0 indicates that a prediction has relatively high uncertainty and should be removed.
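A minimal sketch of the filtering rule follows, using the threshold values reported later in this section (entropy < 0.032187, variance < 0.000128). Several details are assumptions made for illustration: the natural logarithm is used for entropy, and variance is computed on the probability of the predicted (highest-mean) class across the $T$ passes; the paper's exact definitions may differ.

```python
import math

ENTROPY_MAX = 0.032187   # threshold reported in the text
VARIANCE_MAX = 0.000128  # threshold reported in the text


def mean_over_passes(passes):
    """Average class probabilities over T stochastic passes."""
    T = len(passes)
    return [sum(p[c] for p in passes) / T for c in range(len(passes[0]))]


def predictive_entropy(mean_probs, eps=1e-12):
    """Entropy of the averaged class probability vector (natural log assumed)."""
    return -sum(p * math.log(p + eps) for p in mean_probs)


def predictive_variance(passes, mean_probs):
    """Assumed definition: variance of the predicted-class probability across passes."""
    c = max(range(len(mean_probs)), key=mean_probs.__getitem__)
    return sum((p[c] - mean_probs[c]) ** 2 for p in passes) / len(passes)


def keep_prediction(passes):
    """Indicator: 1 if both uncertainty measures fall below their thresholds, else 0."""
    mean_probs = mean_over_passes(passes)
    h = predictive_entropy(mean_probs)
    var = predictive_variance(passes, mean_probs)
    return int(h < ENTROPY_MAX and var < VARIANCE_MAX), h, var


confident = [[0.999, 0.0005, 0.0005]] * 10
ambiguous = [[0.6, 0.3, 0.1], [0.3, 0.6, 0.1]] * 5
keep_confident, h_conf, var_conf = keep_prediction(confident)
keep_ambiguous, h_amb, var_amb = keep_prediction(ambiguous)
```

The confident example is retained, while the ambiguous one is removed because its passes disagree and its averaged probabilities are spread across classes.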

To enhance uncertainty-awareness of CONUS-UncertainFusionNet, we adopt a composite loss function (Eq. 4) combining cross-entropy with predictive entropy. This loss guides the training process by encouraging confident predictions for well-classified samples while maintaining uncertainty for ambiguous cases, ultimately improving the separability of uncertainty distributions between correct and incorrect classifications.

where $t_{ic}$ is 1 when $c$ is the index of the correct class for the $i$th cropland field-view GSV image and 0 otherwise, the predicted probability is the model's estimate that the $i$th field-view image belongs to class $c$, $N$ is the total number of field-view images, and $C$ is the number of classes.

CONUS-UncertainFusionNet is trained on the training split of the GSV component of the CropGSV-Ref dataset, covering diverse crop types across CONUS (Fig. 3). Both ResNet-50 and ViT-B16 within the feature fusion module are initialized with ImageNet pre-trained weights to leverage transferable visual representations. Model hyperparameters, including network architecture, learning rate, and number of training epochs, are selected by optimizing performance on the validation split to ensure robustness and generalizability. Specifically, the network is optimized using stochastic gradient descent (SGD) with a learning rate of 0.001, momentum of 0.9, and batch size of 18, and is trained for 150 epochs with early stopping based on validation loss (Gupta et al., 2021; Gour and Jain, 2022; Liu et al., 2024). To ensure that the uncertainty metrics provide a consistent confidence standard across different agricultural contexts, we determine global thresholds using the validation split of the GSV component of CropGSV-Ref. By plotting the density distributions of entropy and variance for correctly and incorrectly classified samples aggregated across all crop types (Fig. 5), we identify the intersection points as the high-confidence thresholds (entropy < 0.032187, variance < 0.000128). Because the thresholds are global rather than class-specific, the metrics are directly comparable between classes; for example, a given entropy value represents the same level of model ambiguity whether the input is a corn or soybean field, facilitating uniform data filtering for downstream applications. All experiments are conducted on the Illinois Campus Cluster Program (ICCP) at the University of Illinois Urbana–Champaign. The computational infrastructure consists of dual AMD EPYC 7763 processors, 512 GB of system memory, and four NVIDIA A100 GPUs with 80 GB of GPU memory each, connected through 25G high speed networking nodes.
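The idea of choosing a threshold at the crossing of two density curves can be sketched with simple histograms. This is an illustrative approximation under stated assumptions: the paper does not specify how its densities are estimated (a kernel density estimate is equally plausible), and the function names, bin count, and toy scores below are hypothetical.

```python
def density_histogram(values, lo, hi, bins):
    """Histogram normalized to integrate to 1 over [lo, hi]."""
    width = (hi - lo) / bins
    counts = [0] * bins
    for v in values:
        idx = min(int((v - lo) / width), bins - 1)
        counts[idx] += 1
    return [c / (len(values) * width) for c in counts]


def intersection_threshold(correct, incorrect, bins=20):
    """Left edge of the first bin, at or after the mode of the 'correct'
    density, where the 'incorrect' density exceeds the 'correct' density."""
    lo = min(min(correct), min(incorrect))
    hi = max(max(correct), max(incorrect))
    if hi == lo:
        return hi  # degenerate case: all scores identical
    width = (hi - lo) / bins
    d_ok = density_histogram(correct, lo, hi, bins)
    d_bad = density_histogram(incorrect, lo, hi, bins)
    start = max(range(bins), key=d_ok.__getitem__)
    for i in range(start, bins):
        if d_bad[i] > d_ok[i]:
            return lo + i * width
    return hi


# Toy uncertainty scores: correct predictions cluster near zero.
correct_scores = [0.01] * 8 + [0.02] * 6 + [0.05] * 2
incorrect_scores = [0.05] * 3 + [0.2] * 5 + [0.4] * 4
threshold = intersection_threshold(correct_scores, incorrect_scores)
```

With these toy values the crossing falls between the bulk of the correct scores (0.01 to 0.02) and the first incorrect scores (0.05), which is the behavior the intersection criterion is meant to capture.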

Figure 5. Distributions of uncertainty scores for correctly (blue) and incorrectly (orange) classified samples produced by the CONUS-UncertainFusionNet model. The uncertainty threshold is determined at the intersection point of the two density curves, representing the optimal separation between high- and low-confidence predictions.

3.1.4 Cropland Field Boundary Delineation

In parallel, to obtain object-based crop type ground truth, the Segment Anything Model (SAM) is fine-tuned to delineate cropland field boundaries corresponding to the crop type labels extracted from the cropland field-view GSV imagery. SAM is chosen for its flexibility, strong performance, and generalization capabilities across diverse segmentation tasks, making it a promising foundation for adapting to satellite imagery (Osco et al., 2023). SAM consists of three main components (Fig. 6): an image encoder that extracts high-dimensional visual features (image embedding), a prompt encoder that embeds user-provided inputs (in our case, the coordinate of each cropland field-view GSV image as the point prompt), and a mask decoder that generates segmentation masks via two-way cross-attention (Kirillov et al., 2023). While SAM performs well on natural images, its effectiveness on satellite imagery is limited due to weaker spectral contrast at field boundaries and increased scene complexity (Osco et al., 2023; Ferreira et al., 2025; Sumesh et al., 2025). To address this limitation, we fine-tune SAM's mask decoder using the training split of the field boundary component of CropGSV-Ref, while keeping the image and prompt encoders frozen. This decoder-only adaptation allows SAM to recalibrate its boundary representations to Sentinel-2 imagery, bridging the resolution and spectral domain gap without requiring full model retraining. Prior studies have shown that this approach is a computationally efficient strategy that significantly improves SAM's performance on cropland field boundary segmentation (Liu et al., 2024; Pu et al., 2025).

Figure 6. Structure of the SAM with cropland field-view GSV location coordinate as the point prompt for cropland field boundary delineation from Sentinel-2 imagery (© 2024 European Union/ESA/Copernicus Sentinel-2, processed via Google Earth Engine).

Fine-tuning of SAM is conducted using a novel loss function (Eq. 7) that integrates the confidence score loss and the Dice score loss (Milletari et al., 2016). The confidence score loss calculates the mean squared error between SAM's estimated Intersection over Union (IoU) and the actual IoU (Eq. 5), to quantify mask overlap accuracy. The Dice score loss measures the degree of overlap between predicted and ground truth cropland field boundary masks by computing the average difference between 1 and the Dice score (Eq. 6) for all cropland boundary masks. Combining these two losses allows the model to simultaneously improve segmentation accuracy and its ability to estimate prediction reliability, an approach supported by recent studies that highlight the benefits of multi-loss strategies for balancing competing objectives in deep learning segmentation tasks (Terven et al., 2025). IoU is the ratio of the area of overlap between the predicted and ground truth masks to the area covered by the union of both masks. We determine the hyperparameters using the validation split of the field boundary component of CropGSV-Ref, optimizing with the Adam using a learning rate of and a weight decay of 0.01. Fine-tuning is conducted with a batch size of 1 for up to 50 epochs, with early stopping based on the performance on the validation split to prevent overfitting.

where P is the predicted cropland field boundary mask, G is the annotated ground truth cropland field boundary mask from the field boundary component of CropGSV-Ref, |P∩G| is the area of intersection between the predicted and ground truth masks, |P∪G| is the area of their union, and N represents the number of ground truth cropland field boundaries.
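The quantities defined above can be made concrete with a small sketch that represents each mask as a set of pixel coordinates. It assumes the standard definitions IoU = |P∩G| / |P∪G| and Dice = 2|P∩G| / (|P| + |G|), and it assumes an unweighted sum of the two loss terms; the relative weighting in the paper's loss is not stated in this section. An actual fine-tuning loop would use differentiable soft masks rather than hard sets.

```python
def iou(pred, truth):
    """Intersection over Union of two masks given as sets of (row, col) pixels."""
    union = len(pred | truth)
    return len(pred & truth) / union if union else 0.0


def dice(pred, truth):
    """Dice score of two masks given as sets of (row, col) pixels."""
    denom = len(pred) + len(truth)
    return 2 * len(pred & truth) / denom if denom else 0.0


def fine_tune_loss(pred_masks, truth_masks, estimated_ious):
    """Confidence score loss (MSE between estimated and actual IoU) plus
    Dice loss (mean of 1 - Dice). The equal weighting is an assumption."""
    n = len(truth_masks)
    conf_loss = sum(
        (e - iou(p, g)) ** 2
        for p, g, e in zip(pred_masks, truth_masks, estimated_ious)
    ) / n
    dice_loss = sum(1.0 - dice(p, g) for p, g in zip(pred_masks, truth_masks)) / n
    return conf_loss + dice_loss


# Two 2x2 masks that overlap in one column.
pred = {(0, 0), (0, 1), (1, 0), (1, 1)}
truth = {(0, 1), (1, 1), (0, 2), (1, 2)}
loss = fine_tune_loss([pred], [truth], estimated_ious=[1 / 3])
```

In this example the masks share 2 of 6 pixels in their union, so the IoU is 1/3 and the Dice score is 0.5; because the estimated IoU matches the actual IoU, only the Dice term contributes to the loss.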

3.2 Evaluation of the Crop Type Ground Truthing Framework

To assess the reliability of the crop type ground truthing framework, we evaluate its performance using the testing split of CropGSV-Ref in comparison with benchmark models and products from three perspectives: (1) the accuracy of crop type identification from cropland field-view GSV imagery is assessed using the GSV component of CropGSV-Ref; (2) the effectiveness of cropland field boundary delineation from Sentinel-2 imagery is assessed using the field boundary component of CropGSV-Ref; (3) the extracted object-based crop type information is evaluated using the CropGSV-Ref as object-based crop type ground truth.

To assess the efficacy of CONUS-UncertainFusionNet in identifying crop types from cropland field-view imagery, we compare it against three benchmark models: ResNet-50, ViT-B16, and CONUS-FusionNet. ResNet-50 and ViT-B16 serve as strong single-backbone baselines representing advanced convolution-based and transformer-based feature extraction modeling approaches, respectively. To ensure a fair comparison, both ResNet-50 and ViT-B16 are initialized with ImageNet pre-trained parameters and fine-tuned on the training and validation splits of the GSV component of CropGSV-Ref. CONUS-FusionNet shares the same dual-backbone architecture as CONUS-UncertainFusionNet but excludes uncertainty modeling, relying solely on cross-entropy loss and deterministic predictions without MC dropout. We evaluate all models using standard classification accuracy metrics: precision, recall, F1-score, and overall accuracy on the testing split of the GSV component of CropGSV-Ref. Precision (Eq. 8) is the ratio of true positives (TP) to the sum of TP and false positives (FP), reflecting the model's ability to avoid false crop-type assignments. Recall (Eq. 9) is the ratio of TP to the sum of TP and false negatives (FN), indicating how well the model captures all relevant instances of each crop type. The F1-score (Eq. 10), the harmonic mean of precision and recall, balances these two aspects. We compute these metrics per crop type and report both individual and average crop type metric values. Overall accuracy (Eq. 11) measures the proportion of all correct predictions, providing a general assessment of model performance across all crop types. Together, these metrics offer a robust assessment of the models' ability in classifying crop types from cropland field-view GSV imagery.
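The per-class metrics and overall accuracy described above follow the standard definitions, sketched here in plain Python. The function names and toy labels are illustrative only.

```python
def per_class_metrics(y_true, y_pred):
    """Return {class: (precision, recall, f1)} from paired label lists."""
    metrics = {}
    for c in sorted(set(y_true) | set(y_pred)):
        tp = sum(t == c and p == c for t, p in zip(y_true, y_pred))
        fp = sum(t != c and p == c for t, p in zip(y_true, y_pred))
        fn = sum(t == c and p != c for t, p in zip(y_true, y_pred))
        precision = tp / (tp + fp) if tp + fp else 0.0
        recall = tp / (tp + fn) if tp + fn else 0.0
        f1 = (
            2 * precision * recall / (precision + recall)
            if precision + recall
            else 0.0
        )
        metrics[c] = (precision, recall, f1)
    return metrics


def overall_accuracy(y_true, y_pred):
    """Proportion of all predictions that match the reference label."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


y_true = ["corn", "corn", "soybean", "soybean", "cotton"]
y_pred = ["corn", "soybean", "soybean", "soybean", "cotton"]
metrics = per_class_metrics(y_true, y_pred)
accuracy = overall_accuracy(y_true, y_pred)
```

In this toy case one corn image is mislabeled as soybean, which lowers corn recall to 0.5 and soybean precision to 2/3 while overall accuracy is 0.8.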

To evaluate the fine-tuned SAM model's ability to delineate cropland boundaries from Sentinel-2 images, we compare its performance in field boundary delineation against the base SAM model and the field boundary polygons from the CSB dataset. We evaluate the field boundaries extracted with the base SAM, CSB, and fine-tuned SAM models using the testing split of the field boundary component of CropGSV-Ref. Performance is assessed at the object level using precision (Eq. 8), recall (Eq. 9), and F1 score (Eq. 10), based on the spatial overlap between predicted and ground truth cropland boundaries. An IoU threshold of 0.50 is used to determine whether a predicted object is correctly identified (Eq. 5) (He et al., 2017, 2022; Stewart et al., 2020; Braga et al., 2020; Li et al., 2021; Jong et al., 2022; Mei et al., 2022; Gan et al., 2023; Shao et al., 2024). Specifically, predictions with IoU > 0.5 are considered true positives (TP); predictions with 0 < IoU ≤ 0.5 are classified as false positives (FP) due to partial but incorrect overlap; and predictions with IoU = 0 are treated as false negatives (FN), indicating complete detection failure.
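The object-level assignment rule above maps directly onto a short function. The sketch below takes one IoU value per test object (the names are illustrative) and derives precision, recall, and F1 from the resulting TP, FP, and FN counts.

```python
def classify_detection(iou_value, threshold=0.5):
    """Apply the rule in the text: IoU > threshold is TP, 0 < IoU <= threshold is FP, IoU = 0 is FN."""
    if iou_value > threshold:
        return "TP"
    if iou_value > 0:
        return "FP"
    return "FN"


def boundary_scores(ious, threshold=0.5):
    """Object-level precision, recall, and F1 from a list of per-object IoU values."""
    labels = [classify_detection(v, threshold) for v in ious]
    tp, fp, fn = labels.count("TP"), labels.count("FP"), labels.count("FN")
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return precision, recall, f1


ious = [0.9, 0.8, 0.6, 0.3, 0.0]
precision, recall, f1 = boundary_scores(ious)
```

Note that an IoU of exactly 0.5 counts as a false positive under this rule, since only values strictly above the threshold are true positives.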

To assess the reliability of our crop type ground truthing framework, we evaluate the object-based crop type information it produces in comparison with that from the CSB dataset using the testing split of CropGSV-Ref. Performance is measured using overall accuracy across all crop types. A crop type object is considered correctly matched only when both the crop type label is accurate (the predicted crop type, $\mathrm{CropType}_{\mathrm{pred}}$, corresponds to the ground truth crop type, $\mathrm{CropType}_{\mathrm{true}}$) and the cropland field boundary delineation meets the IoU threshold of 0.5 (Eq. 12). To quantify object-level performance, we calculate the percentage of matched objects (labeled as 1 for a correct match and 0 otherwise) for both the crop type ground truthing framework and the CSB dataset, with respect to the testing split of CropGSV-Ref.
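The joint matching criterion can be expressed as a simple indicator. In this sketch the tuple layout is hypothetical, and the boundary test uses IoU > 0.5 for consistency with the true-positive rule in the boundary evaluation; whether an IoU of exactly 0.5 should count as a match is an assumption.

```python
def object_match(pred_label, true_label, iou_value, threshold=0.5):
    """1 if the crop type label is correct AND the boundary IoU exceeds the threshold, else 0."""
    return int(pred_label == true_label and iou_value > threshold)


def object_level_accuracy(records, threshold=0.5):
    """Share of objects that satisfy both the label and boundary criteria.

    `records` is a list of (pred_label, true_label, iou_value) tuples.
    """
    matches = [object_match(p, t, v, threshold) for p, t, v in records]
    return sum(matches) / len(matches)


records = [
    ("corn", "corn", 0.9),        # correct label, good boundary
    ("corn", "soybean", 0.9),     # wrong label
    ("cotton", "cotton", 0.4),    # correct label, poor boundary
    ("alfalfa", "alfalfa", 0.7),  # correct label, good boundary
]
accuracy = object_level_accuracy(records)
```

Only the first and last objects satisfy both criteria, so the object-level accuracy of this toy set is 0.5, even though three of four labels are correct.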

3.3 Production of CropSight-US Dataset

To generate the CropSight-US dataset, we apply the developed crop type ground truthing framework across the entire CONUS. To ensure that the resulting dataset provides broad spatial coverage and captures the diversity of agricultural conditions nationwide, we implement an automated dataset production pipeline to process all available cropland field-view GSV metadata from 2013 to 2023. Since GSV panoramic imagery is typically captured at short spatial intervals (approximately every 10 m), multiple metadata records may correspond to the same agricultural field. To reduce redundancy and prevent repeated ground truthing of the same field, we first spatially link each cropland field-view GSV metadata to cropland field boundaries defined in the CSB dataset. Then, within each CSB-identified field, one cropland field-view GSV metadata is randomly selected to serve as its representative, ensuring spatial uniqueness. Next, to guide the sampling process across crop types, we quantify their spatial distribution by counting the number of retained cropland field-view GSV metadata associated with CSB-identified fields labeled with that crop type. This provides a proxy for evaluating crop street view imagery availability and geographic spread across ASDs. We compute the average amount of metadata per ASD per crop type to establish a baseline for balanced representation, which takes into account crop GSV availability, cultivated extent, and irrigation practices for each ASD. For each crop type and year, all metadata are retained in ASDs with fewer samples than the ASD-average amount of metadata. In ASDs exceeding the average, we retain the baseline number plus a proportionally sampled subset of additional metadata, based on the total number of CSB-linked records for that crop type within the ASD. Finally, to ensure representation of irrigation practices, we stratify the retained samples within each ASD by irrigation status. 
Specifically, we calculate the proportion of irrigated and non-irrigated fields and set the selection targets for each ASD accordingly, preserving the relative distribution of irrigation types within each crop-ASD combination. The crop-wise cultivated area and irrigation information inform ASD-level sampling targets for each crop type in the final application dataset. Based on these targets, we apply the spatially adapted sampling strategy (Sect. 3.1.2) to select specific cropland field-view GSV to be retrieved and processed, with crop type label identified by CONUS-UncertainFusionNet with uncertainty information, and cropland field boundary delineated by our fine-tuned SAM. Through this approach, the CropSight-US dataset achieves broad and evenly distributed spatial coverage while maintaining scalability and crop-type balance for nationwide agricultural monitoring. To ensure these distances reflect functional management units rather than classification artifacts, we dissolved adjacent CSB polygons sharing the same 2023 crop type label prior to sampling. This analysis allowed us to assess CropSight-US' representativeness across diverse landscape configurations and field accessibility conditions throughout the CONUS.
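Two steps of the sampling procedure above, the one-record-per-field deduplication and the ASD-level balancing rule, can be sketched as follows. This is a rough illustration: the record keys (`field_id`), the function names, and especially `extra_fraction` (the share of an ASD's records added on top of the baseline) are hypothetical, since the text does not give the proportion used. Irrigation stratification is not covered here.

```python
import random
from collections import defaultdict


def one_record_per_field(records, seed=0):
    """Randomly keep a single GSV metadata record for each CSB field."""
    rng = random.Random(seed)
    by_field = defaultdict(list)
    for rec in records:
        by_field[rec["field_id"]].append(rec)
    return [rng.choice(group) for group in by_field.values()]


def asd_targets(counts_by_asd, extra_fraction=0.1):
    """Per-ASD retention targets for one crop type and year.

    ASDs at or below the ASD-average keep everything; ASDs above it keep the
    average (baseline) plus a share of their records proportional to their
    total count. `extra_fraction` is a hypothetical parameter.
    """
    baseline = sum(counts_by_asd.values()) / len(counts_by_asd)
    targets = {}
    for asd, n in counts_by_asd.items():
        if n <= baseline:
            targets[asd] = n
        else:
            targets[asd] = min(n, round(baseline + extra_fraction * n))
    return targets


records = [
    {"field_id": "f1", "pano": "a"},
    {"field_id": "f1", "pano": "b"},
    {"field_id": "f2", "pano": "c"},
]
unique = one_record_per_field(records)
targets = asd_targets({"A": 10, "B": 20, "C": 90})
```

With an average of 40 records per ASD, districts A and B keep all of their records, while district C is capped at the baseline plus 10 % of its 90 records.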

For each selected cropland field-view GSV metadata, we retrieve the corresponding field-view GSV image, and the least cloudy Sentinel-2 image in or closest to the month of GSV acquisition. We predict the crop type label from the field-view GSV image with associated uncertainty metrics using the CONUS-UncertainFusionNet model and delineate the cropland field boundary from the Sentinel-2 or NAIP (as substitute when high quality, least-cloud Sentinel-2 imagery is not available) imagery using the fine-tuned SAM model. Each entry in the generated CropSight-US ground truth dataset includes the predicted crop type, the associated confidence metrics (entropy, variance, and confidence level), the delineated cropland field boundary, and the year and month when the original GSV image is captured. Section 5.2 summarizes the spatial distribution, temporal coverage, and crop-type composition of the CropSight-US dataset.

4.1 Crop Type Identification

CONUS-UncertainFusionNet outperforms all other benchmark models on the test split of the GSV component of the CropGSV-Ref (Table 1), with a precision of 97.24 %, recall of 97.17 %, F1-score of 97.18 %, and overall accuracy of 97.22 %. Compared to its backbone architectures, ResNet-50 and ViT-B16, it achieves approximately 4 % higher scores across all evaluation metrics. This improvement demonstrates a more robust characterization of the structured and heterogeneous visual patterns in cropland field-view GSV imagery, by combining ResNet's strength in capturing fine-grained local features with ViT's capacity for modeling long-range spatial relationships. Compared to CONUS-FusionNet, CONUS-UncertainFusionNet shows an approximate 2 % improvement across all evaluation metrics, highlighting the benefits of incorporating uncertainty estimation in the crop type classification pipeline. By explicitly modeling prediction uncertainty, it more effectively handles challenging scenarios common in GSV imagery, such as occlusions, indistinct field boundaries, poor image quality, and visually similar crop types, ultimately improving classification robustness under real-world conditions.

Table 1. Performance evaluation of CONUS-UncertainFusionNet and benchmark models (i.e., ResNet-50, ViT-B16, CONUS-FusionNet) based on the test split of the GSV component of the CropGSV-Ref dataset. The bold font indicates the best-performing model for each metric.

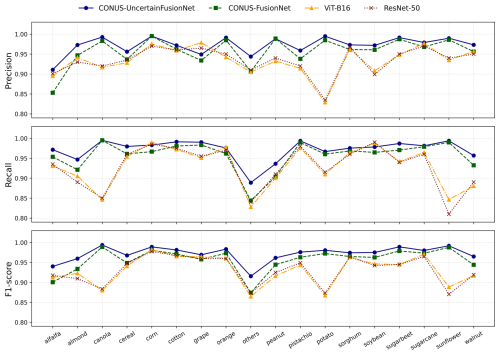

As shown in the class-wise evaluation (Fig. 7), CONUS-UncertainFusionNet consistently outperforms all benchmark models in crop type classification across nearly all categories. It achieves precision, recall, and F1-scores above 0.95 for most crop types, demonstrating strong robustness and generalizability under diverse agricultural conditions. All models perform well on crops such as corn, soybean, cereal, grape, and sorghum, likely due to their distinct visual characteristics and consistent field patterns, which facilitate reliable identification. ViT-B16 and ResNet-50, however, show significant drops in performance for relatively less represented crops like canola, potato, and sunflower, with F1-scores falling below 0.88. This decline is likely due to overlapping visual features and subtle spectral or textural differences, which complicate classification. CONUS-UncertainFusionNet maintains high accuracy on these challenging classes, frequently achieving F1-scores above 0.95. Its robustness is attributed to the integration of uncertainty-aware design, which enables the model to flag and discard low-confidence predictions, reducing misclassifications in ambiguous scenarios. CONUS-FusionNet, while generally effective, shows slightly lower accuracy on visually confounding crop types due to the absence of uncertainty modeling. These results underscore the superior labeling ability of CONUS-UncertainFusionNet across both common and difficult-to-classify crop types, demonstrating its reliability and robustness in operational crop identification tasks.

Figure 7. Class-wise performance evaluation of CONUS-UncertainFusionNet and benchmark models (ResNet-50, ViT-B16, and CONUS-FusionNet) in classifying 17 crop types and one “others” class across the CONUS region. Evaluation metrics include F1-score, precision, and recall, calculated using the test split of the GSV component from the CropGSV-Ref reference dataset.

4.2 Cropland Field Boundary Delineation

As shown in Table 2, the fine-tuned SAM significantly outperforms both the base SAM and the CSB benchmark in cropland field boundary delineation, achieving the highest precision (0.9601), mean IoU (0.9452), and F1 score (0.9801). Among the three methods, the base SAM yields the lowest performance, with a precision of 0.6564 and an F1 score of 0.7926. This lower performance is largely due to the base SAM's original design for general-purpose segmentation of natural images, which is not tailored to distinguish subtle differences between adjacent agricultural parcels in satellite imagery. The CSB benchmark shows slightly better performance than the base SAM, with the F1 score of 0.8375, but still falls short of the fine-tuned SAM. The superior performance of the fine-tuned SAM can be attributed to the domain-specific fine-tuning and task-adapted prompt design. Fine-tuning enables the model to better capture the structural and texture characteristics unique to agricultural landscapes, while the use of strategically placed point prompts near probable field boundaries guides the model's attention to critical spatial discontinuities (e.g., linear edges and subtle textural contrasts) that are frequently overlooked by models trained on general-purpose datasets.

Table 2. Performance of fine-tuned SAM and benchmark models/products in cropland field boundary delineation. The evaluation excludes the “Others” class (500 samples in the test split of CropGSV-Ref), as this category includes non-agricultural landscapes such as forests or built-up areas in the GSV component of CropGSV-Ref, which are not always associated with distinct cropland boundaries.

Visual comparison reveals distinct differences in cropland field boundary delineation quality among the boundaries from CSB and those generated by the base and fine-tuned SAM models (Fig. 8). The fine-tuned SAM produces cohesive and complete field boundaries that closely align with ground truth annotations. In contrast, the base SAM frequently generates incomplete or imprecise boundaries when applied directly to satellite imagery without fine-tuning. This limitation stems from its original design for natural image segmentation, which leads to over-segmentation in agricultural imagery by misinterpreting subtle field edges or texture variations as distinct object boundaries. The boundaries retrieved from the CSB product are more variable. In some cases, they are smaller than the actual field extent, while in others, they appear overly generalized. This variability likely arises from the CSB's boundary generation strategy, which relies on multi-year CDL composites and rule-based vectorization techniques. Such an approach can introduce spatial mismatches with current field configurations due to temporal averaging and generalized assumptions about field boundaries. In summary, both accuracy metrics and visual comparisons consistently demonstrate that the fine-tuned SAM more effectively captures cropland field boundaries corresponding to the cultivated crops identified from the cropland field-view GSV images, outperforming both the base SAM and the CSB benchmark.

Figure 8. Examples of annotated crop field boundaries and boundary delineation outputs overlaid on Sentinel-2 satellite imagery (Imagery © 2024 European Union/ESA/Copernicus Sentinel-2, processed via Google Earth Engine). The visualizations include reference ground truth delineations (from the field boundary component of CropGSV-Ref) alongside boundary delineation results from the base SAM, Crop Sequence Boundary (CSB) benchmark and the fine-tuned Segment Anything Model (SAM), illustrating typical segmentation performance across diverse field conditions.

4.3 Evaluation of Crop Type Ground Truthing Framework and Benchmark CSB Product

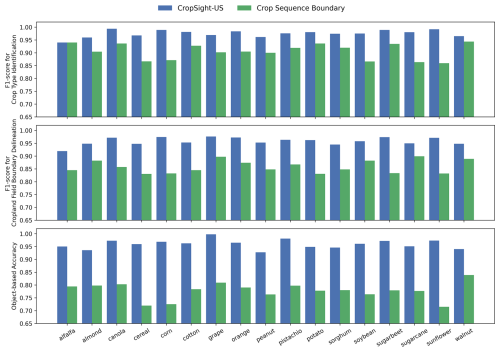

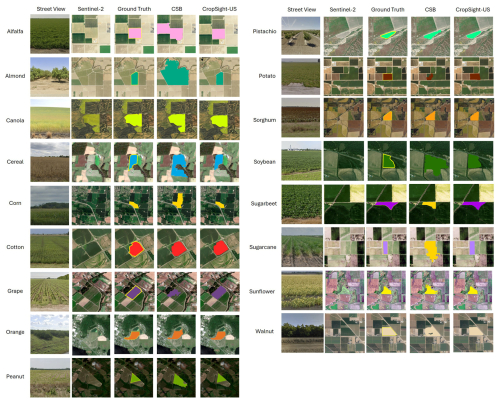

As shown in Fig. 9, the object-based crop type information produced by the crop type ground truthing framework consistently demonstrates superior performance compared to that from the CSB benchmark, across all three evaluation metrics: F1-score for crop type identification, F1-score for cropland field boundary delineation, and object-level accuracy. The framework achieves crop type label F1-scores exceeding 0.95 for the majority of crop types, with improvements over CSB up to 11 %. In terms of boundary delineation, the framework also exhibits a notable advantage, with F1-score gains ranging from 5 %–18 %. These differences reflect the fundamentally distinct crop information retrieval strategies underlying the two systems. The crop type ground truthing framework leverages cropland field-view GSV imagery in combination with satellite observations to generate object-level crop type information, whereas the benchmark CSB product relies on pixel-based classifications derived from multi-year CDL composites, which are then converted into field boundaries through rule-based vectorization to produce the object-based crop type information (Boryan et al., 2011; Abernethy et al., 2023; Hunt et al., 2024). The most substantial differences are observed in object-level accuracy, which integrates both semantic correctness of crop type labels and geometric precision of field boundaries. The crop type ground truthing framework consistently achieves object-level accuracy around 0.95, indicating that approximately 95 % of the delineated objects simultaneously meet both labeling and boundary delineation accuracy criteria. Compared to CSB, the object-level accuracy of the crop type ground truthing framework is improved by 15 % to 25 % across crop types. These improvements are particularly pronounced for cereal, corn, and sunflower, where CSB exhibits relatively low performance in both crop classification and boundary delineation. 
Overall, the CSB benchmark exhibits large variability in performance across crop types, whereas the crop type ground truthing framework maintains consistently high accuracy. The object-based crop type information generated by our framework more reliably captures both the correct crop type and the corresponding field geometry, in contrast to the CSB benchmark, which often introduces errors in one or both aspects (Fig. 10). This consistency indicates robustness of our framework across diverse cropping systems and underscores the framework's suitability for reliable object-based crop type ground truthing.

Figure 9. Object-level comparison between object-based crop type information retrieved using the crop type ground truthing framework and Crop Sequence Boundary (CSB) benchmark, including the F1-score for crop type identification, the F1-score for cropland field boundary delineation, and object-based accuracy.

Figure 10 Examples across the 17 crop types showcasing roadside field-view GSV imagery, corresponding Sentinel-2 satellite imagery, object-based crop type ground truth, and object-based crop type information generated by Crop Sequence Boundary (CSB) and our ground-truthing framework developed to produce the CropSight-US dataset. GSV images are from © 2024 Google Maps, and Sentinel-2 imagery thumbnails are from © 2024 European Union/ESA/Copernicus Sentinel-2, processed via Google Earth Engine.

5.1 Operational Cropland Field-view GSV Metadata Collection

We compile all cropland field-view GSV metadata across CONUS using our proposed operational field-view GSV metadata collection method. From billions of GSV records available between 2013 and 2023, this process identifies approximately 1.9 million records, which serve as candidates for constructing the CropSight-US dataset. As shown in Fig. 11a, the spatial distribution of collected cropland field-view GSV metadata varies widely across CONUS, with high concentrations across diverse agricultural regions such as the California Central Valley, the Pacific Northwest, the northern Corn Belt, and the Southeast. The number of available cropland field-view GSV metadata records varies substantially across ASDs, ranging from several hundred thousand in some regions to only a few hundred or fewer in others. This variation is likely due to differences in cropland coverage and in the update frequency of GSV across regions. Figure 11b presents the temporal distribution of the collected cropland field-view GSV metadata. The metadata span 2013 to 2023, with the majority captured between March and September, in alignment with key growth stages of dominant crops in the U.S. Midwest. A substantial increase in cropland field-view GSV metadata availability is observed in more recent years.

5.2 CropSight-US

From the approximately 1.9 million cropland field-view GSV metadata records collected, we generate the CropSight-US object-based crop type ground truth dataset, consisting of 124 419 records. These object-based crop type ground truth polygons (crop type ground truth objects) span a wide geographic range across ASDs (Fig. 12) and cover the period from 2013 to 2023 (Table 3). CropSight-US therefore captures conditions broadly representative of typical agricultural field configurations in CONUS. The dataset exhibits considerable variation across both crop types and years. Corn is by far the most prominent crop, with 54 069 ground truth objects, and maintains a consistent presence throughout the dataset's time span. Other major crops such as soybean (24 628), cereal (10 210), and alfalfa (8203) also contribute substantially to the dataset. A noticeable drop in collected ground truth is observed in 2017 and 2020. The decline in 2020 is likely due to reduced data collection under pandemic-related restrictions, while the drop in 2017 may be attributed to changes in data collection, release delays, or camera hardware updates (Pina, 2024). In recent years, soybean and almond show upward trends in sample counts, indicating either expanded spatial coverage or evolving GSV acquisition priorities in the regions where these crops are grown. Several less widely cultivated crops, such as canola, sunflower, pistachio, and sugarbeet, remain sparsely represented throughout the entire period. Overall, the dataset captures extensive temporal and spatial variation while also reflecting class imbalance across crop types and years. These characteristics should be considered carefully in downstream modeling and analysis to ensure robust and generalizable outcomes.
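One common way to account for such class imbalance in downstream modeling is inverse-frequency class weighting. The sketch below is a minimal illustration that uses only the four object counts reported above (the remaining crop types are omitted for brevity); the weighting formula is a widely used heuristic and an assumption here, not a procedure prescribed by this study.

```python
from typing import Dict

# Object counts for the four largest classes, as reported in the text.
# The other crop types are left out of this illustration.
counts: Dict[str, int] = {
    "corn": 54069,
    "soybean": 24628,
    "cereal": 10210,
    "alfalfa": 8203,
}


def inverse_frequency_weights(class_counts: Dict[str, int]) -> Dict[str, float]:
    """Weight each class by total / (n_classes * count).

    Rare classes receive weights above 1 and frequent classes below 1, so that
    every class contributes equally to a weighted loss in aggregate.
    """
    total = sum(class_counts.values())
    n_classes = len(class_counts)
    return {crop: total / (n_classes * n) for crop, n in class_counts.items()}


weights = inverse_frequency_weights(counts)
for crop, weight in sorted(weights.items(), key=lambda item: item[1]):
    print(f"{crop:>8s}: {weight:.3f}")
```

Alternatives such as stratified subsampling or resampling of rare classes may be preferable when sample counts for the sparsely represented crops are very small.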

Figure 12 Overview and example zoom-in visualizations of the CropSight-US object-based crop type ground truth dataset over the Agricultural Statistics Districts (ASD) from USDA. Satellite base map Imagery © 2026 NASA, accessed via Google Earth Engine.

The monthly distribution of object-based crop type ground truth polygons in the CropSight-US dataset reveals distinct seasonal trends across the 17 dominant crop types in CONUS (Fig. 13). Most row crops (e.g., corn, soybean) reach peak sample availability between June and September, aligning with the core growing season and periods of optimal visibility in street-view imagery. In contrast, crops with more regionally concentrated cultivation, such as almond, orange, grape, and sugarcane, peak earlier in the year, typically from February to May, reflecting regional differences in crop phenology and seasonal GSV availability. This temporal coverage across multiple months supports applications requiring seasonally aligned ground truth data for training and validating in-season crop classification models.

Figure 14 provides a comprehensive overview of the spatial, categorical, and temporal distribution of high-confidence crop type ground truth objects in the CropSight-US dataset, revealing key patterns in model-based confidence across ASDs (a), crop types (b), years (c), and months (d). Spatially, high-confidence ground truth objects are concentrated in major production areas such as the Corn Belt and California's Central Valley, where consistent cropping systems and field patterns likely simplify classification and improve label reliability. This spatial concentration improves the dataset's utility for model training and validation in key agricultural zones. Categorically, crops with geographically limited distributions, such as grape, orange, almond, sugarcane, and peanuts, tend to exhibit higher proportions of high-confidence ground truths. This likely reflects more uniform environmental conditions and consistent crop characteristics within their localized growing regions. Meanwhile, crops that are more widely distributed across different regions, such as alfalfa, corn, soybean, cotton, sorghum, and cereal, show a lower share of high-confidence ground truths. This is probably due to greater variability in cultivar types, management practices, and regional conditions, which leads to more diverse field appearances and makes classification more challenging. Temporally, the CropSight-US dataset maintains extensive coverage of crop type labels from 2013 to 2023. High-confidence predictions comprise around 30 %–50 % of total labels in most years and months, which supports reliable year-to-year comparisons, long-term trend analysis, and the training of temporally aware models using quality-assured data. In 2013, the dataset contains the highest number of ground truths, with over 25 000 records. Except for 2017 and 2020, which have limited data, all other years each contribute roughly 10 000 or more ground truth samples.
In terms of monthly distribution, the data is highly concentrated during the peak growing season (July to September), with over 80 000 ground truths collected during this period. Collectively, the confidence metrics support the filtering or weighting of training samples, enable targeted model improvement, and guide additional data collection efforts to strengthen classification performance across underrepresented or uncertain regions and crop types.
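The filtering and weighting of training samples by confidence can be sketched as follows. The record layout, the field names `crop_type` and `confidence`, the assumption that confidence lies in [0, 1] with 1 denoting the highest confidence, and the example values are all hypothetical and serve only to illustrate the two options.

```python
from typing import Dict, List

Sample = Dict[str, object]

# Hypothetical samples; field names and values are illustrative only.
samples: List[Sample] = [
    {"crop_type": "corn", "confidence": 1.0},
    {"crop_type": "soybean", "confidence": 0.6},
    {"crop_type": "alfalfa", "confidence": 0.3},
]


def filter_by_confidence(items: List[Sample], min_confidence: float) -> List[Sample]:
    """Option 1: keep only samples at or above a confidence threshold."""
    return [s for s in items if float(s["confidence"]) >= min_confidence]


def confidence_weights(items: List[Sample]) -> List[float]:
    """Option 2: keep all samples but weight each by its confidence.

    Weights are rescaled to have a mean of 1 so that the overall magnitude of
    a weighted loss stays comparable to the unweighted case.
    """
    confidences = [float(s["confidence"]) for s in items]
    total = sum(confidences)
    if total == 0:
        raise ValueError("at least one sample must have non-zero confidence")
    return [c * len(confidences) / total for c in confidences]


high_confidence_only = filter_by_confidence(samples, min_confidence=1.0)
sample_weights = confidence_weights(samples)
```

Filtering yields a smaller but cleaner training set, whereas weighting retains the full spatial and temporal coverage at the cost of including less certain labels; the appropriate choice depends on the reliability requirements of the application.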

Figure 14 Overview of high-confidence (confidence being 1) crop type labels in CropSight-US aggregated by (a) ASD (© USDA), (b) Crop Type, (c) Year and (d) Month across CONUS.

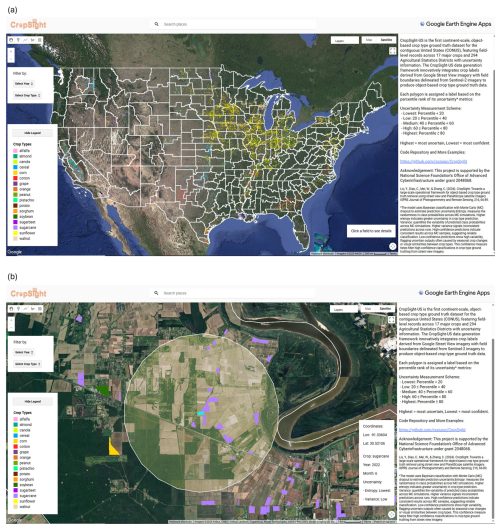

An interactive web-based viewer, hosted on Google Earth Engine (GEE), provides public access to the CropSight-US ground truth dataset and supports visualization of both national-scale crop distributions and field-level observations from 2013 to 2023 (Fig. 15). Each object in the dataset includes the crop type, observation month and year, geographic coordinates, classification uncertainty, and cropland field boundary. The interface allows filtering by year and crop type for users' convenience and further analysis. This tool enhances the dataset's usability for remote sensing research, agricultural monitoring, and geospatial modeling workflows.
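For users working with the dataset outside the viewer, the attributes listed above map naturally onto a simple record type, and the same year and crop type filters can be reproduced in a few lines. The sketch below is an assumption-laden illustration: the class name, attribute names, and example coordinates are hypothetical and do not reflect the actual schema of the released files.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass(frozen=True)
class GroundTruthObject:
    """One crop type ground truth object (hypothetical attribute names)."""
    crop_type: str
    year: int
    month: int
    lon: float
    lat: float
    uncertainty: float
    boundary: Tuple[Tuple[float, float], ...]  # polygon vertices as (lon, lat)


def select(
    objects: List[GroundTruthObject],
    year: Optional[int] = None,
    crop_type: Optional[str] = None,
) -> List[GroundTruthObject]:
    """Filter objects by year and/or crop type; None leaves a filter inactive."""
    return [
        obj
        for obj in objects
        if (year is None or obj.year == year)
        and (crop_type is None or obj.crop_type == crop_type)
    ]


# Made-up square field outline used for every example record.
square = ((-90.0, 40.0), (-89.99, 40.0), (-89.99, 40.01), (-90.0, 40.01))
objects = [
    GroundTruthObject("corn", 2019, 7, -89.995, 40.005, 0.0, square),
    GroundTruthObject("soybean", 2019, 8, -89.995, 40.005, 0.2, square),
    GroundTruthObject("corn", 2021, 7, -89.995, 40.005, 0.1, square),
]

corn_2019 = select(objects, year=2019, crop_type="corn")
all_2019 = select(objects, year=2019)
```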

Figure 15 CropSight-US viewer within the Google Earth Engine application interface. (A) Overview of the complete ground truth dataset across Agricultural Statistics Districts (ASDs) © USDA. (B) An example of a zoomed-in view of a sugarcane field with the nearest-year NAIP imagery (Imagery © 2025 NASA, Airbus, CNES/Airbus, Landsat/Copernicus, Maxar Technologies, USDA/FPAC/GEO, Vexcel Imaging US, Inc.) overlay, accessed via Google Earth Engine. The viewer application is available at https://ee-azzhou249.projects.earthengine.app/view/cropsight-us (last access: 15 December 2025).

Our object-based crop type ground truth dataset CropSight-US, including crop types with uncertainty information, their associated crop field boundaries, and metadata, can be accessed via Zenodo: https://doi.org/10.5281/zenodo.15702414 (Zhou et al., 2025). The CropSight-US dataset does not include any GSV imagery or metadata information that can be used to directly trace back to the original GSV. Access to GSV imagery remains subject to the Google Maps Platform Terms of Service and must be obtained independently by users.

A GEE viewer application of the spatiotemporal distribution of the CropSight-US dataset is available at: https://ee-azzhou249.projects.earthengine.app/view/cropsight-us (last access: 19 April 2026).

We introduce CropSight-US, the first national scale, object-based crop type ground truth dataset across the CONUS. Generated through a novel deep learning framework that integrates GSV and Sentinel-2 imagery, the dataset provides high-quality crop type labels precisely aligned with field boundaries. This dataset fills a critical gap by supplying large-scale, reliable reference data to support agricultural remote sensing model training and to benchmark operational crop type mapping at the national scale. The underlying crop type ground truthing framework also offers a scalable, timely, and resource-efficient alternative to traditional field surveys and visual interpretation, facilitating broader adoption in both research and operational agricultural settings.