the Creative Commons Attribution 4.0 License.

An accurate 10 m annual crop map product of maize and soybean across the United States

Haijun Li

Bernard Adusei

Jeffrey Pickering

Andre Lima

Andrew Poulson

Antoine Baggett

Peter Potapov

Ahmad Khan

Viviana Zalles

Andres Hernandez-Serna

Samuel M. Jantz

Amy H. Pickens

Carolina Ortiz-Dominguez

Xinyuan Li

Theodore Kerr

Zhen Song

Svetlana Turubanova

Eddy Bongwele

Heritier Koy Kondjo

Anna Komarova

Stephen V. Stehman

Matthew C. Hansen

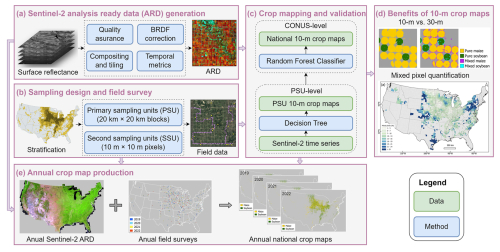

High-resolution crop maps over large spatial extents are fundamental to many agricultural applications; however, generating high-quality crop maps consistently across space and time remains a challenge. In this study, we improved a workflow for crop mapping and developed an openly available, annual, 10 m spatial resolution maize and soybean map product over the Contiguous United States (CONUS) from 2019 to 2022 (available at https://glad.umd.edu/dataset/mapping-crops-10-m-resolution-united-states, last access: 26 December 2025). We obtained all available Sentinel-2 surface reflectance data between May and October for every year, applied quality assurance, corrected the bidirectional reflectance distribution function (BRDF) effects, and generated 10 d analysis ready data (ARD) composites. We then derived multi-temporal metrics from the 10 d ARD as training features for the national-scale wall-to-wall mapping. We implemented a stratified, two-stage cluster sampling, and then conducted annual field surveys and collected ground data. Utilizing the training data with Sentinel-2 multi-temporal metrics and topographic factors, we trained random forest models generalized for annual maize and soybean classification separately. Validated using field data from the two-stage cluster sample, our annual maps achieved consistent overall accuracies (OA) greater than 95 % with standard errors of less than 1 %. User's accuracies (UAs) and producer's accuracies (PAs) for maize were higher than 91 % and 84 % across the years, and UAs and PAs for soybean were greater than 88 % and 82 %, respectively. To illustrate the substantial improvement of the 10 m map over existing datasets, e.g., the 30 m Cropland Data Layer (CDL), we aggregated the 10 m maps to 30 m spatial resolution and quantified the number of mixed pixels that can be reduced by improving the mapping from 30 to 10 m. 
The counties with the most maize and soybean production in Iowa, Illinois and Nebraska had the lowest reduction in mixed pixels, ranging from 1 % to 7 %, whereas southern counties had a higher reduction in mixed pixels. Overall, the median percentages of mixed maize and soybean pixels reduction across all counties were 8 % and 9 %, respectively. With more Sentinel-2-like data available from continuous observations and incoming satellite missions, we anticipate that 10 m crop maps will greatly benefit long-term monitoring for agricultural practices from the field to global scales. The dataset is also available at https://doi.org/10.6084/m9.figshare.28934993.v2 (Li et al., 2025).


Satellite-derived crop maps are essential to many agricultural applications, such as crop yield prediction (Bolton and Friedl, 2013; Song et al., 2022; Wang et al., 2024), food market forecasting (Tanaka et al., 2023), crop area estimation (Khan et al., 2016), conservation policy design (Song et al., 2021b; Zalles et al., 2021), smallholder livelihood evaluation (Lambert et al., 2018), warfare impacts on food security (Li et al., 2022; Lin et al., 2023), and greenhouse gas emissions in agriculture (Escobar et al., 2020; Ouyang et al., 2023). However, along with these benefits are the outstanding challenges to generating high-quality crop maps, including developing consistent ready-to-use satellite datasets, collecting representative field data, and building classification algorithms robust to phenological variations.

Dense time series of satellite observations with complete spatial coverage is essential to mapping crops at broad scales. With global coverage and daily revisit frequency, the Moderate Resolution Imaging Spectroradiometer (MODIS) data are often used for crop mapping in early studies (Wardlow et al., 2007; Wardlow and Egbert, 2008). However, spatial details within individual small fields can rarely be depicted at 250 m resolution (Fritz et al., 2015), especially for more than 475 million smallholder and family farms accounting for 12 % of the world's agricultural land (Lowder et al., 2016). Since the opening of the Landsat archive in 2008 (Woodcock et al., 2008), Landsat data have been extensively used to generate 30 m crop maps in many parts of the world, such as in North America (Boryan et al., 2011; Fisette et al., 2013; Johnson and Mueller, 2021; Song et al., 2017; Wang et al., 2020), Europe (Foerster et al., 2012), South America (Song et al., 2021b), and Asia (Dong et al., 2016; Khan et al., 2021; Remelgado et al., 2020). However, Landsat-based crop mapping is hampered by the relatively sparse 16 d temporal frequency (8 d with two satellites), especially when cloudy weather persists. Compared to Landsat, Sentinel-2A and -2B together have a revisit frequency of 5 d and provide 10, 20 and 60 m spectral bands including red edge bands that are particularly useful for crop identification (Immitzer et al., 2016; Song et al., 2021a). These advantages make Sentinel-2 data one of the best publicly accessible data sources for crop mapping (Ghassemi et al., 2022; Han et al., 2021; Luo et al., 2022; You et al., 2021).

Crop classification from satellite imagery is usually implemented by relating specific crop types to remotely sensed features, using reference data and classification algorithms such as conventional machine learning or advanced deep learning (e.g., Alami Machichi et al., 2023; Joshi et al., 2023). Therefore, in situ data can serve as critical references to annotate satellite imagery for supervised classifications, although field surveys over large areas require extensive time and labor resources. Currently, the US Department of Agriculture (USDA) National Agricultural Statistics Service (NASS) collects periodic field data across the US and produces the Cropland Data Layer (CDL) annually based on a large amount of ground data and supervised algorithms (Boryan et al., 2011). When current-year labels are unavailable, some researchers have explored transferring pre-trained models to target regions or years (Luo et al., 2022; Wang et al., 2019), or generating labels with knowledge-guided approaches (Lin et al., 2022; You et al., 2023). However, these approaches are limited to experiments at small spatial scales, such as the US Midwest and Northeast China, and thus the efficiency of national-scale crop classification over large countries with more challenging environments remains to be explored. In cases where reference data are entirely unavailable, unsupervised classifications are used first to cluster satellite-derived features and then assign crop labels to the clusters to generate approximate crop maps (Konduri et al., 2020; Xiong et al., 2017). They, however, are vulnerable to outliers and noisy features and require intensive visual inspections (Wang et al., 2019). In summary, collecting representative ground data is a critical yet challenging component for large-area crop mapping.

Spatiotemporal consistency in crop classifications is necessary to make annual crop maps comparable and thus allow long-term crop monitoring and change analysis. Yet this consistency is partly undermined by crop phenology variations across large extents, which depend on soil properties, planting dates, and weather conditions, among other factors (Deines et al., 2023; Yang et al., 2017). On the one hand, within a calendar year, crop progress differs by region. To address this issue, some studies trained regional models through agroecological zoning, which requires zone-specific training and validation (de Abelleyra et al., 2020; Wardlow and Egbert, 2008). On the other hand, phenological profiles that are misaligned from year to year can jeopardize classification consistency across years, especially when extreme weather events occur (Manoochehr et al., 2021). Given these interannual variations, classifiers that accurately identify crops in typical growing seasons using single-date or time-series satellite imagery may perform poorly in abnormal years. To address this, researchers proposed year-specific classifications (Massey et al., 2017; Som-ard et al., 2022). However, these annual models need fine-tuning based on reference data from each corresponding year, especially when encountering unseen growing trends, and thus cannot be generalized over long periods.

Multi-temporal metrics are statistical transformations of temporal profiles of satellite observations that can improve spatial and temporal consistency and facilitate land cover mapping for large areas. In the mid-1980s, researchers derived phenological features from pixel time series from the Advanced Very High Resolution Radiometer (AVHRR) for vegetation monitoring (Malingreau, 1986) and from Landsat Multispectral Scanner (MSS) for crop classification (Badhwar, 1984). This metrics method was then widely used for land cover mapping and change analysis from regional to global scales using AVHRR, MODIS and Landsat data (DeFries et al., 1995; Hansen et al., 2013; Potapov et al., 2021b; Song et al., 2018). For crop mapping over continental scales, the metrics method was used to generalize classification models robust to interannual phenological variations (Song et al., 2021b). Many studies are adopting similar concepts for regional crop mapping (Kerner et al., 2022; Konduri et al., 2020; Yang et al., 2023; Zhong et al., 2014).

In the US, the CDL has been used widely for many applications (Bolton and Friedl, 2013; Gao et al., 2017; Lobell et al., 2020; Wright and Wimberly, 2013; Yan and Roy, 2016). Recently, the 2024 CDL has been successfully released with the spatial resolution increased from 30 to 10 m (https://www.nass.usda.gov/Research_and_Science/Cropland/SARS1a.php, last access: 26 December 2025). However, the previous years of CDL are at 30 m resolution, and have inconsistent accuracies depending on the location, and inaccurate classifications are observed in sparse or complex agricultural regions (Larsen et al., 2015). The 30 m spatial resolution can lead to substantial mixed pixels, obscuring incremental or pixel-level changes, particularly along field boundaries. In comparison, 10 m maps with a higher spatial resolution can improve the delineation of precise field boundaries, reducing mixed pixels in individual fields, as well as lowering uncertainties of area estimation. Global 10 m crop mapping efforts are rare, although the WorldCereal provides an example (Van Tricht et al., 2023). In Europe, recent 10 m crop mapping efforts include the Crop Map of England (CROME) (CROME, 2024), the parcel-level crop maps in the Netherlands (ESA, 2024), the crop maps produced by the Sentinel-2 for Agriculture (Sen2-Agri) (Defourny et al., 2019; Inglada et al., 2015), and the more recent High Resolution Layer Crop Types (CTY) (EU, 2024). In Asia, large-area crop-specific maps have been generated recently (Han et al., 2021; Li et al., 2023; Mei et al., 2024). In the US, the potential of national-scale 10 m crop mapping has rarely been explored, although a recent prototyping effort has been reported (Huang et al., 2024).

The objective of this study is to develop annual 10 m crop maps from Sentinel-2 time series and to quantify their benefits over existing 30 m products. We generated annual maize and soybean maps at 10 m spatial resolution over the entire Contiguous US (CONUS) from 2019 to 2022, and quantified the reduction in mixed pixels relative to 30 m maps at field, regional and national scales. We improved a workflow developed in previous studies (Li et al., 2023; Song et al., 2017) by combining satellite analysis ready data (ARD) generation, field survey design, and machine learning. An overview of the workflow for annual crop map production is presented in Fig. 1.

2.1 Satellite analysis ready data (ARD) generation

Operational crop mapping over large areas relies on satellite data that are geometrically and radiometrically consistent with quality assessment (e.g., Boryan et al., 2011; Fisette et al., 2013; Song et al., 2021b). Analysis ready data (ARD), defined by the Committee on Earth Observation Satellites (CEOS), meet such criteria as “have been processed to a minimum set of requirements and organized into a form that allows immediate analysis with a minimum of additional user effort and interoperability both through time and with other datasets” (https://ceos.org/ard/, last access: 11 November 2024). To support annual wall-to-wall crop mapping over the CONUS, we obtained all available Sentinel-2 data between May and October, applied quality assurance, corrected the bidirectional reflectance distribution function (BRDF) effects, and generated 10 d ARD composites.

We downloaded Sentinel-2A and -2B Level-2A Bottom of the Atmosphere reflectance (S2 L2A) images from Google Cloud, including the 10 m blue, green, red and near-infrared (NIR) bands, the 20 m red edge (RE1, RE2, RE3), narrow near-infrared (NNIR) and shortwave infrared (SWIR1, SWIR2) bands. We selected images acquired between 1 May and 31 October after filtering out images with >80 % cloud cover. We then processed all available Sentinel-2 data by utilizing the GLAD and Zaratan high-performance computing clusters at the University of Maryland and generated Sentinel-2 ARD for the wall-to-wall crop mapping. Details of the ARD generation are described in the following sections.

2.1.1 Quality assurance

Based on the S2 scene classification (SCL) layer, we generated the cloud mask by merging categories of cloud shadow, thin cirrus, snow, cloud with low, medium and high probability into cloudy pixels. We also produced an additional cloud mask layer derived from the Fmask algorithms (Zhu et al., 2015) and the Cloud Displacement Index (Frantz et al., 2018). We combined the SCL-derived cloud mask with the additional cloud mask as the final quality assurance (QA) layer.
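The mask merging described above can be sketched as follows. This is a minimal illustration rather than the processing code used for the product: the numeric SCL class codes follow the published Sentinel-2 L2A legend (with class 7 read as the low-probability cloud class, which is an assumption), the function names are hypothetical, and combining the two masks with a logical OR is also an assumption, since the text does not state the rule.

```python
# SCL class codes from the Sentinel-2 L2A scene classification legend.
# Reading class 7 as "cloud low probability" is an assumption.
SCL_MASKED = {
    3,   # cloud shadow
    7,   # unclassified / cloud low probability
    8,   # cloud medium probability
    9,   # cloud high probability
    10,  # thin cirrus
    11,  # snow or ice
}


def scl_cloud_mask(scl):
    """Return True where the SCL class is one of the merged 'cloudy' categories."""
    return [[value in SCL_MASKED for value in row] for row in scl]


def combine_masks(scl_mask, extra_mask):
    """Flag a pixel if either mask flags it (logical OR, an assumed rule)."""
    return [[a or b for a, b in zip(row_a, row_b)]
            for row_a, row_b in zip(scl_mask, extra_mask)]


# Toy 2 x 3 scene: SCL classes and a stand-in for the additional cloud mask.
scl = [[4, 8, 3],
       [5, 10, 6]]
extra = [[False, False, False],
         [True, False, False]]
qa = combine_masks(scl_cloud_mask(scl), extra)
```

In this toy scene only the vegetation pixel in the upper-left corner and the water pixel in the lower-right corner remain usable.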

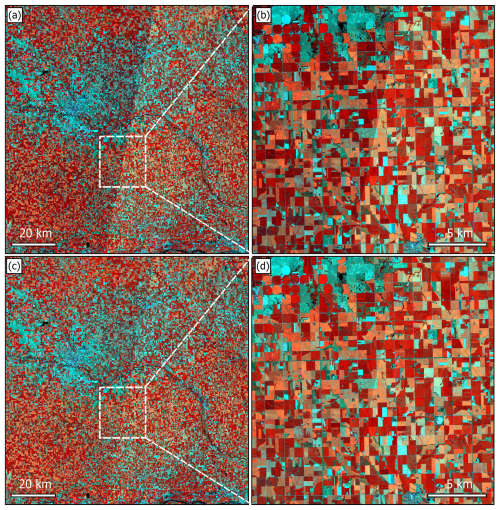

2.1.2 Bidirectional Reflectance Distribution Function (BRDF) correction

We corrected the BRDF effects using the c-factor method to derive nadir BRDF-adjusted reflectance (NBAR) (Roy et al., 2017a, b). The S2 L2A product provides solar and view geometry metadata in 23 × 23 grids at 5 km spatial resolution. For each multi-spectral instrument (MSI) detector in each spectral band, the solar zenith and azimuth angles remain consistent; the view zenith and azimuth angles, however, vary from one detector to another, and from band to band. We calculated the mean value of the view zenith and azimuth angle for each 5 km grid across all detectors and all spectral bands. Per-pixel solar and view angles at 10 m resolution were derived by nearest neighbor interpolation of the 5 km grid values. As a result, the 10 m angle layers were used to generate NBAR images using the global spectral BRDF model parameters (Roy et al., 2017a, b). This process reduced the BRDF effects and improved the spatial coherence compared to the surface reflectance without BRDF correction (Fig. 2).
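The angle preparation step can be illustrated with a short sketch. It assumes that the 23 × 23 grid nodes sit at multiples of 5 km from the upper-left corner of the tile and that missing detector values are stored as NaN; both are assumptions, as are the function names. The c-factor computation itself is not shown.

```python
import math


def mean_ignoring_missing(values):
    """Average the valid (non-NaN) values; return NaN if none are valid."""
    valid = [v for v in values if not math.isnan(v)]
    return sum(valid) / len(valid) if valid else math.nan


def mean_angle_grid(grids):
    """Average several same-shaped angle grids (one per detector/band) cell by cell.

    A plain arithmetic mean is used, as in the text; azimuth wrap-around
    near 0/360 degrees is not handled in this sketch.
    """
    rows, cols = len(grids[0]), len(grids[0][0])
    return [[mean_ignoring_missing([g[r][c] for g in grids]) for c in range(cols)]
            for r in range(rows)]


def nearest_neighbor_angle(grid, row, col, pixel_size=10.0, grid_step=5000.0):
    """Angle for one 10 m pixel, taken from the closest 5 km grid node."""
    # Pixel centre in metres from the upper-left corner (assumed grid origin).
    y = (row + 0.5) * pixel_size
    x = (col + 0.5) * pixel_size
    grid_row = min(int(y / grid_step + 0.5), len(grid) - 1)
    grid_col = min(int(x / grid_step + 0.5), len(grid[0]) - 1)
    return grid[grid_row][grid_col]


# Two toy 2 x 2 view-zenith grids, one with a missing value.
detector_a = [[2.0, 4.0], [6.0, 8.0]]
detector_b = [[4.0, math.nan], [8.0, 10.0]]
mean_grid = mean_angle_grid([detector_a, detector_b])
```

Here `nearest_neighbor_angle(mean_grid, 300, 100)` returns the value of the node at 5 km south of the origin, because the pixel centre at 3005 m is closer to that node than to the one at 0 m.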

Figure 2. Sentinel-2 false color composites (R: NIR, G: SWIR1, B: SWIR2) over a selected UTM tile 14TMP centered at (97.131° W, 41.942° N). (a–b) Surface reflectance. (c–d) Nadir BRDF-adjusted reflectance (NBAR). Two overlapping Sentinel-2B swaths acquired from orbit R112 on 18 July 2022 (backscattering direction) and orbit R012 on 21 July 2022 (forward scattering direction) were used. The orbit R112 data were overlaid on the orbit R012 data where they overlapped. All composites are displayed with the same stretch parameters. Figure contains modified Copernicus Sentinel data (2022).

2.1.3 Temporal composition and tiling

We resampled the 20 m bands to 10 m using the nearest neighbor method, applied the QA layer, and created 10 d median composites. For each NBAR band in a given 10 d interval, the median value of all clear-sky observations and the corresponding day of the year (DOY) were selected. We also implemented temporal linear interpolation on a per-pixel basis to fill the data gaps (Griffiths et al., 2019). For a missing value in a 10 d interval, the gap-filled value was calculated from the preceding and subsequent valid observations. A maximum of six 10 d intervals (i.e., 60 d or 2 months) was used to limit the period so that the interpolation was temporally relevant. For cases in which cloud-free observations are unavailable for two months, we did not conduct interpolation.
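A per-pixel sketch of the compositing and gap-filling logic is given below. Two readings of the text are assumed and may differ from the production code: the median is taken as an actual observation (the lower median for an even count) so that its DOY can be kept, and the six-interval limit is applied to the distance between the valid observations that bracket a gap. The function names are hypothetical.

```python
import math


def median_composite(observations):
    """Pick the median clear-sky observation of one 10 d interval.

    observations: list of (reflectance, doy) pairs. Returns the pair holding
    the (lower) median reflectance, or None if the interval has no clear data.
    """
    if not observations:
        return None
    ranked = sorted(observations)
    return ranked[(len(ranked) - 1) // 2]


def fill_gaps(series, max_span=6):
    """Linearly interpolate NaN gaps between valid neighbours.

    A gap is filled only if the bracketing valid observations are at most
    max_span intervals apart (assumed reading of the 60 d limit).
    """
    filled = list(series)
    valid = [i for i, v in enumerate(series) if not math.isnan(v)]
    for left, right in zip(valid, valid[1:]):
        span = right - left
        if span < 2 or span > max_span:
            continue  # nothing to fill, or neighbours too far apart in time
        for i in range(left + 1, right):
            weight = (i - left) / span
            filled[i] = series[left] + weight * (series[right] - series[left])
    return filled
```

For example, a series with valid values at intervals 0 and 3 has intervals 1 and 2 filled on the straight line between them, whereas a gap whose bracketing observations are eight intervals apart is left empty.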

We divided the entire study area into 1° × 1° non-overlapping tiles in geographic latitude/longitude projection with WGS84 datum. Each tile was named by the latitude and longitude coordinates of the lower-left corner, with 0.0001° × 0.0001° spatial resolution to approximately match a 10 m pixel of Sentinel-2. We reprojected 1028 Sentinel-2 Universal Transverse Mercator (UTM) tiles into 939 1° × 1° tiles over the United States.
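As an illustration of the tiling scheme, the sketch below maps a coordinate to its 1° × 1° tile and to a pixel position inside that tile. The tile name format and the convention of counting rows from the northern edge are hypothetical; only the lower-left-corner naming rule and the 0.0001° pixel size come from the text, which implies 10 000 × 10 000 pixels per tile.

```python
import math

PIXEL_DEG = 0.0001          # pixel size stated in the text
PIXELS_PER_SIDE = 10_000    # 1 degree / 0.0001 degree


def tile_name(lat, lon):
    """Name a tile by its lower-left corner (the name format is hypothetical)."""
    lat0, lon0 = math.floor(lat), math.floor(lon)
    east_west = "E" if lon0 >= 0 else "W"
    north_south = "N" if lat0 >= 0 else "S"
    return f"{abs(lon0):03d}{east_west}_{abs(lat0):02d}{north_south}"


def pixel_index(lat, lon):
    """Row/column of a coordinate inside its tile, rows counted from the north edge."""
    lat0, lon0 = math.floor(lat), math.floor(lon)
    col = min(int((lon - lon0) / PIXEL_DEG), PIXELS_PER_SIDE - 1)
    row = min(int((lat0 + 1 - lat) / PIXEL_DEG), PIXELS_PER_SIDE - 1)
    return row, col


# Centre point of the tile shown in Fig. 2.
name = tile_name(41.942, -97.131)
row, col = pixel_index(41.942, -97.131)
```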

2.1.4 Multi-temporal metrics

The 10 d S2 ARD may have inconsistent observational frequencies across space and time depending on the geographical location and cloud condition. Generating multi-temporal metrics from ARD can improve data consistency, and thus enable large-area land cover mapping, which has been demonstrated in various applications at continental and global scales (Hansen et al., 2013; Potapov et al., 2021a; Song et al., 2021a).

Following the method in Potapov et al. (2020), we generated multi-temporal metrics from the 10 d S2 ARD (see Table S1 in the Supplement). First, we derived the Normalized Difference Vegetation Index (NDVI, (NIR − Red)/(NIR + Red)) (Tucker, 1979) and the normalized ratio between shortwave infrared bands (SWSW, (SWIR1 − SWIR2)/(SWIR1 + SWIR2)) from corresponding NBAR bands. Second, we ranked time-series observations by each NBAR band or index individually. We then selected the second maximum, the second minimum, and median values per pixel, and calculated the 10th, 25th, 75th, and 90th percentiles. We also calculated the average, standard deviation, and amplitude between these percentiles and the second maximum and second minimum values. Third, we ranked the observation days of year (DOYs) according to the time-series NDVI, and derived values on the DOYs corresponding to the second maximum, the second minimum, and median, as well as the 10th, 25th, 75th, and 90th percentiles of NDVI values. The average, standard deviation and amplitude were also calculated from these extracted values.
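A few of these metrics can be sketched for a single pixel as follows. The percentile interpolation rule, the inclusive bounds used for inter-percentile averages, and all function names are assumptions made for illustration; the full set of metrics is defined in Table S1 and Potapov et al. (2020), not here.

```python
def ndvi(nir, red):
    """Normalized Difference Vegetation Index."""
    return (nir - red) / (nir + red)


def percentile(ranked, q):
    """Linear-interpolation percentile of an ascending list (q in 0..100)."""
    pos = (len(ranked) - 1) * q / 100.0
    lo = int(pos)
    hi = min(lo + 1, len(ranked) - 1)
    return ranked[lo] + (pos - lo) * (ranked[hi] - ranked[lo])


def rank_metrics(values):
    """Metrics from one band or index ranked by its own values."""
    if len(values) < 3:
        raise ValueError("need at least three observations")
    ranked = sorted(values)
    second_min, second_max = ranked[1], ranked[-2]
    p25, p75 = percentile(ranked, 25), percentile(ranked, 75)
    inner = [v for v in ranked if p25 <= v <= p75]
    return {
        "second_min": second_min,
        "second_max": second_max,
        "median": percentile(ranked, 50),
        "p25": p25,
        "p75": p75,
        "avg_p25_p75": sum(inner) / len(inner),
        "amp_min2_max2": second_max - second_min,
    }


def value_at_ndvi_rank(band_values, ndvi_values, rank):
    """Band value on the date whose NDVI holds the given rank (-2 = second maximum)."""
    order = sorted(range(len(ndvi_values)), key=lambda i: ndvi_values[i])
    return band_values[order[rank]]


# Toy time series of one band (scaled reflectance) over seven composites.
metrics = rank_metrics([10, 50, 20, 40, 30, 60, 0])
# SWIR value on the date with the second-highest NDVI.
swir_at_peak = value_at_ndvi_rank([30, 12, 25, 10, 20], [0.1, 0.8, 0.3, 0.9, 0.5], -2)
```

Using the second maximum and second minimum instead of the extremes makes the metrics less sensitive to a single residual cloud or shadow observation.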

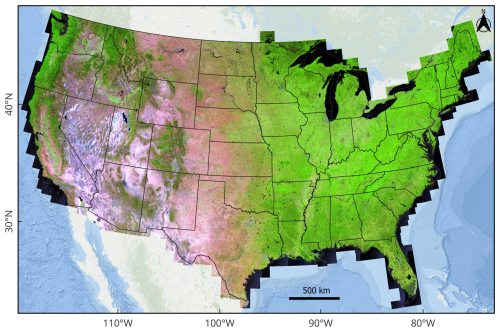

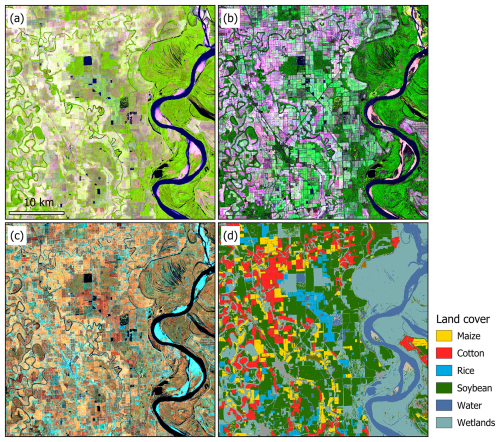

In total, we calculated 621 metrics. The NBAR averages between the 25th and 75th percentiles from observations ranked by individual bands are illustrated in Figs. 3 and 4a. The NBAR amplitudes reveal land surface phenology and thus simplify visual interpretation of general land cover types such as cropland, open water, forest, and wetland (Fig. 4b). When the averages are calculated from observations with the highest NDVI values (between 90th percentile and the second maximum NDVI value), the composite shows surface reflectance during the peak growing season, improving the identification of multiple crop types (Fig. 4c and d).

Figure 3. Sentinel-2 composites over the United States in 2022. The composites were created using the average value of nadir Bidirectional Reflectance Distribution Function (BRDF)-adjusted reflectance (NBAR) between the 25th and 75th percentiles from observations ranked by individual bands (R: SWIR1, G: NIR, B: Red). The original 10 m data are resampled to 250 m using nearest neighbor resampling for visualization purposes. Background base map source: Esri | Powered by Esri.

Figure 4. Composites of Sentinel-2 multi-temporal metrics in the Mississippi Valley. (a) SWIR1-NIR-Red composite of NBAR average between the 25th and 75th percentiles from observations ranked by individual bands; (b) SWIR1-NIR-Red composite of NBAR amplitude between the second maximum and the second minimum values; (c) NIR-SWIR1-SWIR2 composites of average NBAR between the 90th percentile and the second maximum values from observations ranked by NDVI; (d) 2022 Cropland Data Layer. The coordinate of the center point is (91.312° W, 33.665° N). All panels are displayed in the same scale at 10 m resolution. Figure contains modified Copernicus Sentinel data (2022).

2.2 Sampling design and field survey

To support the 10 m crop mapping, we conducted extensive field surveys for in situ data collection, based on a two-stage cluster sampling design following Song et al. (2017). This approach has been demonstrated to be effective for agricultural applications in which ground reference data are collected at regional (Khan et al., 2018), national (King et al., 2017; Li et al., 2023), and continental (Song et al., 2021b) scales.

2.2.1 Sampling design

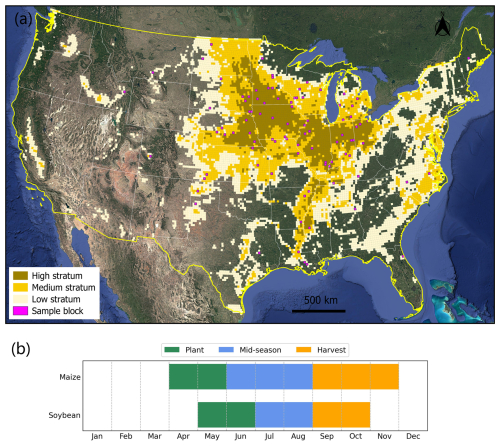

Following previous research, we divided the study area into 20 km × 20 km equal-area blocks and designed the two-stage cluster sampling to target fields to visit. We first derived the per-block maize and soybean area fractions from the previous year's crop map, sorted all blocks from the highest to the lowest fraction, and then stratified the ranked blocks into high, medium and low strata. Following previous studies (King et al., 2017; Song et al., 2017), we selected a simple random sample of blocks from each stratum as the primary sampling units (PSUs) and selected a simple random sample of 10 m × 10 m pixels in each PSU as the secondary sampling units (SSUs) (Fig. 5a) (see Table S2).
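The two-stage design can be sketched as below. The equal-count stratum boundaries, the sample sizes and the function names are placeholders chosen for illustration; the actual strata and sample allocations are reported in Table S2. The only fixed quantity is the block geometry: a 20 km × 20 km block holds 2000 × 2000 pixels of 10 m.

```python
import random


def stratify_blocks(fractions, n_strata=3):
    """Rank block ids by crop fraction (high to low) and cut into equal-count strata.

    Equal-count cuts are an assumption made for this sketch.
    """
    ranked = sorted(fractions, key=fractions.get, reverse=True)
    size = -(-len(ranked) // n_strata)  # ceiling division
    return [ranked[i:i + size] for i in range(0, len(ranked), size)]


def two_stage_sample(strata, psus_per_stratum, ssus_per_psu,
                     pixels_per_side=2000, seed=0):
    """Stage 1: random blocks (PSUs) per stratum. Stage 2: random pixels (SSUs) per PSU."""
    rng = random.Random(seed)
    sample = {}
    for stratum in strata:
        for block in rng.sample(stratum, min(psus_per_stratum, len(stratum))):
            cells = rng.sample(range(pixels_per_side ** 2), ssus_per_psu)
            # Convert each flat cell index to a (row, col) position in the block.
            sample[block] = [divmod(cell, pixels_per_side) for cell in cells]
    return sample


# Nine toy blocks with crop fractions 0.0 .. 0.8 from a previous-year map.
fractions = {f"b{i}": i / 10 for i in range(9)}
strata = stratify_blocks(fractions)          # high, medium, low
sample = two_stage_sample(strata, psus_per_stratum=2, ssus_per_psu=5)
```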

Figure 5. 2022 stratified sampling design for field survey. (a) Stratified sampling design. 20 km × 20 km equal-area blocks were stratified into high, medium and low strata. (b) Crop calendar for maize and soybean over the US. Satellite base map is from Imagery © 2025 Landsat/Copernicus, Map data © 2025 Google.

2.2.2 Field data collection

The typical planting season of the US maize starts in April while soybean planting starts in May; the harvesting season starts in September and ends in November for maize and in October for soybean (see Fig. 5b above). We conducted the field survey during the peak growing season in July and August. Consistent with previous research (Li et al., 2023; Song et al., 2021a), we collected two types of datasets during the field survey: (1) ground reference data over the probability sample of SSUs for map evaluation and crop area estimation; and (2) “windshield survey” reference data for model training. These windshield survey data were collected along the driving routes between the SSUs, and were only used to train models for classification and not for validation, whereas the probability sample was exclusively used for validation.

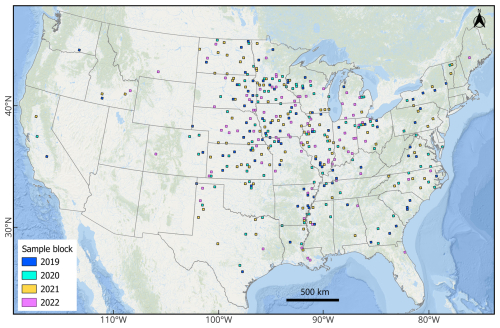

For each year from 2019 to 2022, we selected a separate stratified two-stage cluster sample following the general sampling framework and collected in-season ground data (Fig. 6, Tables S2–S4).

2.3 Crop classification

We conducted crop classifications in two stages: (1) at the PSU level, we mapped maize and soybean over all sample PSUs using field data, Sentinel-2 time-series imagery, and decision tree classifiers; and (2) at the national scale, we employed random forest classifiers to map maize and soybean using the PSU-maps as training, multi-temporal metrics derived from Sentinel-2 ARD as well as the topographic features derived from TanDEM-X (DLR, 2015) as input. We evaluated the accuracy of the national crop map using the field data from the SSUs to determine the reference class label.

2.3.1 PSU-level crop mapping

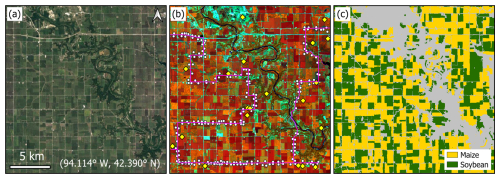

We processed all available Sentinel-2 data over the PSUs from 1 May to 31 October to produce in-season PSU-level maize and soybean maps. For each PSU in each year, we trained two decision tree classifiers separately for maize and soybean classification by using all the bands and normalized ratios of any two bands, as well as the “windshield survey” points as training (Fig. 7b). Applying the trained models to time-series images, we created a binary maize/non-maize map and a binary soybean/non-soybean map at 10 m resolution for each PSU (Fig. 7c). These in-season PSU maps from 2019 to 2022 were then pooled as training labels for national-scale wall-to-wall mapping.
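The feature construction for the PSU-level classifiers can be illustrated as follows. The sketch assumes that "all the bands" refers to the ten 10 m and 20 m bands listed in Sect. 2.1 and builds the normalized ratio for every pair of them; the band keys and function names are hypothetical, and the decision tree training itself is not shown.

```python
from itertools import combinations

# Assumed band set: the ten Sentinel-2 bands listed in Sect. 2.1.
BANDS = ["blue", "green", "red", "re1", "re2", "re3", "nir", "nnir", "swir1", "swir2"]


def normalized_ratio(a, b):
    """(a - b) / (a + b), set to 0 when the denominator is 0."""
    total = a + b
    return (a - b) / total if total != 0 else 0.0


def pixel_features(reflectance):
    """Band values plus the normalized ratio of every pair of bands.

    reflectance: dict mapping each band name in BANDS to its value.
    """
    features = dict(reflectance)
    for a, b in combinations(BANDS, 2):
        features[f"nr_{a}_{b}"] = normalized_ratio(reflectance[a], reflectance[b])
    return features


# One toy observation: every band at 0.2 except red and NIR.
observation = {band: 0.2 for band in BANDS}
observation.update(red=0.1, nir=0.5)
features = pixel_features(observation)
```

With ten bands this yields 45 pairwise ratios, so each observation date contributes 55 features per pixel under these assumptions.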

Figure 7. An example of primary sampling unit (PSU) block-level crop mapping using field data. (a) A representative sample block in Illinois with center coordinates shown on Imagery © 2025 Landsat/Copernicus, Map data © 2025 Google. (b) Field data collection in the PSU. The secondary sampling units (SSUs) of pixels are shown as yellow diamonds. The “windshield survey” points are shown as white dots. The driving routes are shown in pink tracks. (c) PSU-level crop maps.

2.3.2 Wall-to-wall crop classification

The multi-temporal metrics derived from the Sentinel-2 ARD were the main input for national mapping. In addition, we downloaded the nominal 12 m TanDEM-X data from the German Aerospace Center (DLR, 2015), and derived 10 m spatial resolution elevation, slope, and aspect using nearest neighbor resampling. These topographic data were combined with the multi-temporal metrics (see Sect. 2.1.4 above) as inputs for supervised classification. We generated training labels from the 10 m maize and soybean PSU maps from 2019 to 2022. We randomly selected 0.2 % of maize (soybean) and 0.8 % of non-maize (non-soybean) pixels from each PSU as training labels. Pixels classified as both maize and soybean in the binary PSU maps were excluded from the training dataset.
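The label sampling for one PSU can be sketched as below. It assumes that the 0.2 % and 0.8 % rates apply to the crop and non-crop pixel counts separately and that a conflicting pixel is one that both binary maps label as crop; the data layout and function name are hypothetical.

```python
import random


def sample_training_pixels(maize_map, soybean_map, crop="maize",
                           crop_rate=0.002, other_rate=0.008, seed=0):
    """Draw training pixel ids for one PSU.

    maize_map, soybean_map: dicts mapping pixel id -> 0/1 from the binary PSU maps.
    Returns (crop_ids, non_crop_ids) for the requested crop.
    """
    rng = random.Random(seed)
    target = maize_map if crop == "maize" else soybean_map
    # Exclude pixels that both binary maps claim (conflicting classifications).
    usable = [p for p in target if not (maize_map[p] and soybean_map[p])]
    positives = [p for p in usable if target[p] == 1]
    negatives = [p for p in usable if target[p] == 0]
    n_pos = round(len(positives) * crop_rate)
    n_neg = round(len(negatives) * other_rate)
    return rng.sample(positives, n_pos), rng.sample(negatives, n_neg)


# Toy PSU of 10 000 pixels with 100 conflicting pixels (ids 3900..3999).
maize = {p: int(p < 4000) for p in range(10_000)}
soybean = {p: int(3900 <= p < 7000) for p in range(10_000)}
maize_ids, non_maize_ids = sample_training_pixels(maize, soybean, crop="maize")
```

Sampling non-crop pixels at four times the crop rate gives the classifier more examples of the heterogeneous background class.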

To conduct crop classifications, we employed Random Forest (RF), a widely adopted ensemble machine learning algorithm in remote sensing due to its accuracy, computational efficiency, and robustness to noise (Belgiu and Drăguţ, 2016; Breiman, 2001). Following the approach detailed in Li et al. (2023), we tailored RF binary classifiers separately for maize (RF-Maize) and soybean (RF-Soybean), using the pooled training data from 2019 to 2022. The models were fine-tuned using a random search followed by a grid search (Probst et al., 2019), on a randomly selected subset of 1 % of the training dataset, and subsequently re-trained with optimal hyperparameters on the entire training dataset (see more technical details in Figs. S1, S2 and Table S5 in the Supplement).

We aggregated the per-pixel class probability layers from RF-Maize and RF-Soybean by selecting the highest probability (maize vs. soybean) and derived the aggregated probability layer and corresponding crop mask layer (see Fig. S3 for an example). We then applied a 5 × 5 pixel kernel opening followed by a 10 × 10 pixel kernel closing, to eliminate scattered pixels and fill holes within large homogeneous fields. The kernel sizes were selected based on tests and visual assessments to balance noise removal while preserving fine details. We generated the final maize and soybean map using the aggregated probability layer following the area-matching approach reported by Song et al. (2017, 2021b) and Li et al. (2023).
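The probability aggregation and a simplified form of area matching can be sketched as follows. The area-matching function reflects one reading of the approach, in which the most probable pixels of a crop are retained until the mapped area equals a target area; the cited papers define the actual procedure, and the tie rule, target value and function names below are assumptions. The morphological filtering step is not shown.

```python
def aggregate(p_maize, p_soybean):
    """Per pixel, keep the crop with the higher probability and that probability.

    Ties go to maize, which is an arbitrary choice for this sketch.
    """
    labels, probs = [], []
    for pm, ps in zip(p_maize, p_soybean):
        labels.append("maize" if pm >= ps else "soybean")
        probs.append(max(pm, ps))
    return labels, probs


def area_matched_mask(labels, probs, crop, target_area_m2, pixel_area_m2=100.0):
    """Keep the most probable pixels of `crop` until the mapped area reaches the target."""
    n_keep = round(target_area_m2 / pixel_area_m2)
    candidates = sorted((i for i, label in enumerate(labels) if label == crop),
                        key=lambda i: probs[i], reverse=True)
    keep = set(candidates[:n_keep])
    return [i in keep for i in range(len(labels))]


# Five toy pixels with class probabilities from the two binary models.
labels, probs = aggregate([0.9, 0.2, 0.6, 0.55, 0.1], [0.1, 0.7, 0.3, 0.5, 0.2])
# Hypothetical target of 200 m2 of maize, i.e. two 10 m pixels.
maize_mask = area_matched_mask(labels, probs, "maize", target_area_m2=200.0)
```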

2.4 Map evaluation

2.4.1 Accuracy assessment

Utilizing the annually field-visited SSUs, we validated the annual maps from 2019 to 2022. Overall accuracy (OA), user's accuracy (UA) and producer's accuracy (PA), together with their associated uncertainties, were estimated using a ratio estimator for two-stage cluster sampling within a stratified design, following good practices (Olofsson et al., 2013; Stehman, 2014). The formulas for accuracy estimation are found in Song et al. (2017, Appendix A).
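For orientation, a generic combined ratio estimator for stratified cluster samples is sketched below. It is not a transcription of the formulas in Song et al. (2017, Appendix A): it assumes equal-sized PSUs with the same number of SSUs each, so that the second-stage expansion factor cancels, and it omits the standard error. The data layout and function names are hypothetical.

```python
def stratified_ratio(strata, numerator, denominator):
    """Combined ratio estimator: weighted PSU totals of y over weighted PSU totals of x.

    strata: list of dicts with "n_blocks" (blocks in the stratum, N_h) and
    "psus" (list of sampled PSUs, each a list of (map_label, ref_label) pairs).
    """
    y_total = x_total = 0.0
    for stratum in strata:
        weight = stratum["n_blocks"] / len(stratum["psus"])  # N_h / n_h
        for psu in stratum["psus"]:
            y_total += weight * sum(1 for s in psu if numerator(*s))
            x_total += weight * sum(1 for s in psu if denominator(*s))
    return y_total / x_total


def overall_accuracy(strata):
    return stratified_ratio(strata, lambda m, r: m == r, lambda m, r: True)


def users_accuracy(strata, crop):
    return stratified_ratio(strata, lambda m, r: m == r == crop, lambda m, r: m == crop)


def producers_accuracy(strata, crop):
    return stratified_ratio(strata, lambda m, r: m == r == crop, lambda m, r: r == crop)


# Toy sample: two strata, two PSUs each, four SSUs per PSU.
M, O = "maize", "other"
strata = [
    {"n_blocks": 100, "psus": [[(M, M), (M, O), (O, O), (O, O)],
                               [(M, M), (O, M), (O, O), (O, O)]]},
    {"n_blocks": 300, "psus": [[(O, O)] * 4,
                               [(O, O), (O, O), (O, O), (M, M)]]},
]
```

In this toy sample the weighted estimates are 0.9375 for OA and 250/300 for both the UA and PA of maize, which differ from the unweighted proportions because the second stratum represents three times as many blocks.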

2.4.2 Crop area comparison with official statistics

We derived the pixel-counting-based crop areas for maize and soybean from the annual crop maps, for each year from 2019 to 2022. We compared these crop areas with the official statistical crop areas from the USDA NASS at the county and state levels. We then calculated the root-mean-square difference (RMSD) and r² between the mapped crop areas and the statistical areas.
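The two agreement measures can be computed as in the sketch below. The coefficient is taken here to be the squared Pearson correlation, which is an assumption because the text does not define it; the function names and the toy areas are illustrative only.

```python
import math


def rmsd(mapped, reported):
    """Root-mean-square difference between paired area values."""
    squared = [(m - r) ** 2 for m, r in zip(mapped, reported)]
    return math.sqrt(sum(squared) / len(squared))


def r_squared(mapped, reported):
    """Squared Pearson correlation between paired area values (assumed definition)."""
    n = len(mapped)
    mean_x, mean_y = sum(mapped) / n, sum(reported) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(mapped, reported))
    sxx = sum((x - mean_x) ** 2 for x in mapped)
    syy = sum((y - mean_y) ** 2 for y in reported)
    return sxy ** 2 / (sxx * syy)


# Toy areas in km2 for three administrative units.
mapped_km2 = [100.0, 200.0, 300.0]
reported_km2 = [100.0, 200.0, 400.0]
difference = rmsd(mapped_km2, reported_km2)
agreement = r_squared(mapped_km2, reported_km2)
```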

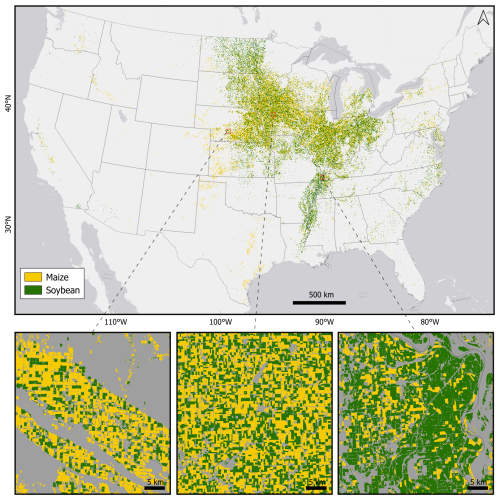

3.1 Visual assessment

Our 10 m crop map reveals well-known spatial patterns of maize and soybean cultivation in the United States (Fig. 8). The dominant soybean cultivation is shown in the Midwest states, the Great Plains states, the Mississippi Valley and the eastern coast, whereas maize is widely distributed across the country.

Figure 8. The 10 m maize and soybean map for 2022. Background base map source: Esri | Powered by Esri.

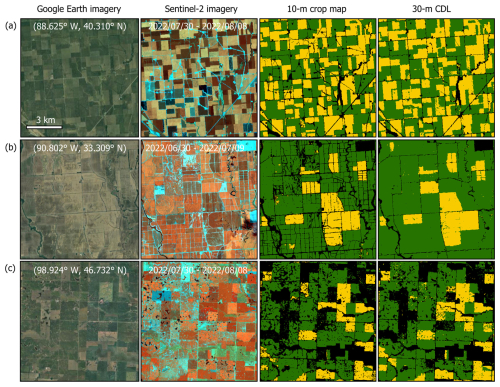

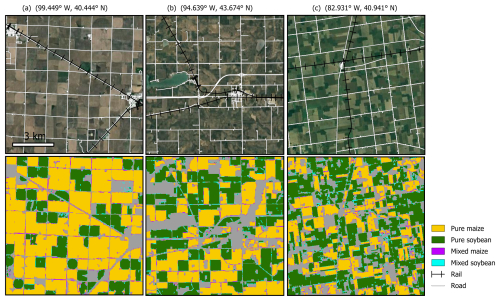

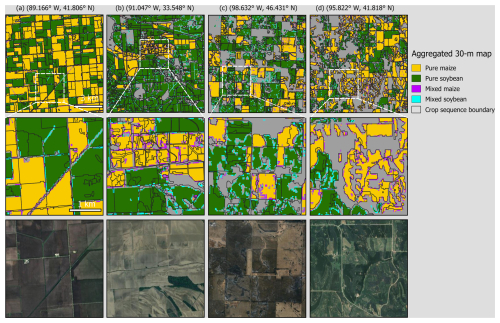

Specifically, our 10 m crop map delineated more field-scale details compared to the 30 m CDL (Fig. 9). Midwest states such as Illinois typically have rectangular crop fields, and our 10 m map generated homogeneous fields with clearer boundaries (Fig. 9a). Our map also captured more landscape fragmentation, such as smaller fields with greater crop diversity in the Mississippi Valley (Fig. 9b) and the agriculture/wetland mosaic in North Dakota (Fig. 9c).

Figure 9. Maize and soybean classification in 2022 over selected regions. Rows (a)–(c) are representative sites in Illinois, Mississippi, and North Dakota. All panels are displayed at the same scale (10 km × 10 km). The coordinates of the center points are shown on Imagery © 2025 Landsat/Copernicus, Map data © 2025 Google. The 10 d composite periods are shown on the Sentinel-2 image (R: NIR, G: SWIR1, B: SWIR2). Maize and soybean are shown in yellow and green colors, respectively.

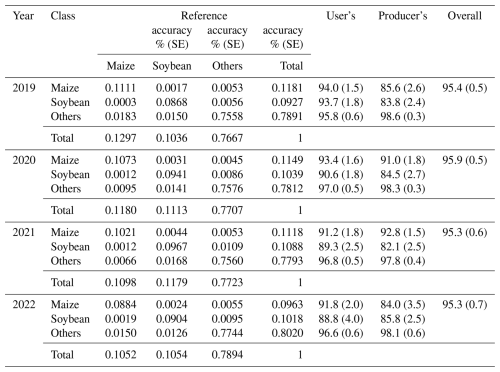

3.2 Quantitative accuracy assessment

We conducted an accuracy assessment for the annual maps using the annual SSUs as references (Table 1). All maps achieved OAs greater than 95 % with standard errors less than 1 %. UAs and PAs for maize were higher than 91 % and 84 %, respectively, while UAs and PAs for soybean were higher than 89 % and 82 %, respectively.

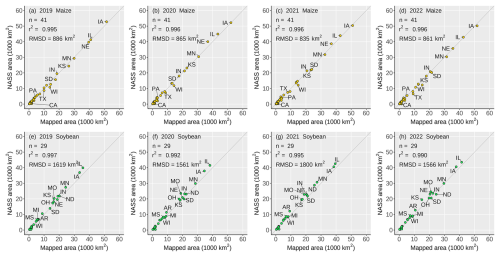

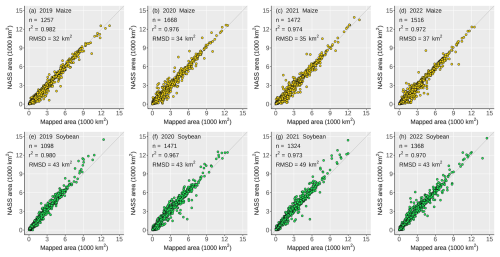

3.3 Comparison between the crop maps and agricultural statistics

We compared our map-based area estimates with agricultural statistics reported by the NASS at state and county scales. The state-level comparisons between our mapped areas and the NASS statistics showed close agreement, with r² greater than 0.99 and root-mean-square differences (RMSDs) less than 900 km² for maize and less than 1800 km² for soybean (Fig. 10). At the county level (Fig. 11), our mapped maize and soybean areas also matched the NASS statistics well, with r² greater than 0.97 and RMSDs between 30 and 50 km².

4.1 The benefits of 10 m crop maps in mixed pixel reduction

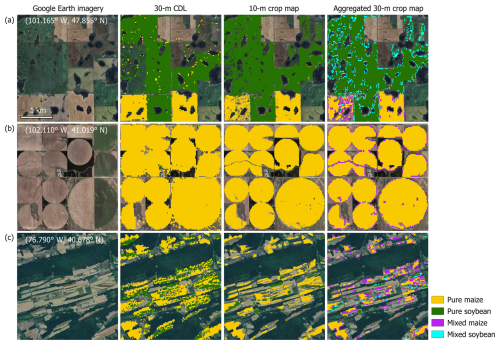

Using the 2022 10 m crop map as an example, we conducted a quantitative data analysis to illustrate the benefits of 10 m crop mapping over 30 m mapping. We first removed small fields less than nine 10 m pixels (one 30 m pixel), then spatially aggregated the 10 m map to 30 m resolution and derived the maize and soybean cover fraction for each 30 m pixel. We defined pure pixels as 100 % cover and anything below as mixed pixels. We applied a 50 % cover threshold to determine the dominant crop type within mixed pixels. Pixels where neither maize nor soybean cover reached 50 % were ignored. Rather than assessing accuracies for the aggregated 30 m maps, our objective was to compare the 10 m versus 30 m resolution by quantifying changes in mixed pixels and analyzing the spatial patterns.
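The aggregation and pixel labelling rules can be sketched as follows for one crop. The removal of small fields is not shown, and the percentage at the end uses pure plus mixed pixels as the denominator, which is an assumption about how the county-level percentages were computed; the function names are hypothetical.

```python
def aggregate_to_30m(crop_10m):
    """Cover fraction of each 30 m pixel from a 2-D list of 0/1 values at 10 m.

    Each 30 m pixel is a 3 x 3 block of 10 m pixels; incomplete edge blocks are dropped.
    """
    rows, cols = len(crop_10m) // 3, len(crop_10m[0]) // 3
    return [[sum(crop_10m[3 * r + i][3 * c + j]
                 for i in range(3) for j in range(3)) / 9
             for c in range(cols)] for r in range(rows)]


def classify_pixel(fraction):
    """Pure at 100 % cover, mixed from 50 % up to below 100 %, otherwise ignored."""
    if fraction == 1.0:
        return "pure"
    if fraction >= 0.5:
        return "mixed"
    return "ignored"


# Toy 6 x 6 maize mask at 10 m, i.e. a 2 x 2 grid of 30 m pixels.
maize_10m = [
    [1, 1, 1, 1, 1, 0],
    [1, 1, 1, 1, 1, 0],
    [1, 1, 1, 1, 0, 0],
    [0, 0, 0, 1, 1, 1],
    [0, 1, 0, 1, 1, 1],
    [0, 0, 0, 1, 1, 1],
]
fractions = aggregate_to_30m(maize_10m)
classes = [[classify_pixel(f) for f in row] for row in fractions]
flat = [c for row in classes for c in row]
percent_mixed = 100.0 * flat.count("mixed") / (flat.count("mixed") + flat.count("pure"))
```

Because a 30 m pixel contains nine 10 m pixels, the cover fraction takes steps of 1/9, so the 50 % threshold separates pixels with at least five crop sub-pixels from those with at most four.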

Unsurprisingly, the aggregated 30 m map showed that pure pixels are clustered in large-size homogeneous fields (Fig. 12a). Mixed pixels occurred in small, fragmented fields, on field edges, or along the road networks, where crops coexisted with other land cover (e.g., other crops, pasture, built-up, etc.) (Fig. 12b, c). Our 10 m maps showed clear advantages over the 30 m CDL in mixed pixel reduction in various landscapes (Fig. 13). In North Dakota where numerous fields are fragmented, the 10 m map presents more homogeneous fields and captures within-field patterns of water ponds (Fig. 13a); for center-pivot irrigated fields in Nebraska, the 10 m map delineates cleaner circular patterns (Fig. 13b); in Appalachian Pennsylvania where many fields are in narrow strips, the 10 m map distinguishes neighboring strip cropping fields better than the 30 m CDL in which the fields are mapped with a large amount of mixed pixels (Fig. 13c).

Figure 12. The 2022 aggregated 30 m maize and soybean map overlaid with road and rail networks. Columns (a)–(c) are selected sites in Nebraska, Minnesota, and Ohio. The 30 m map was derived by spatially aggregating the 10 m map, calculating the fractional cover, and categorizing each pixel as pure (100 % cover) or mixed (<100 % cover). All panels are displayed at the same scale (10 km × 10 km). The coordinates of the center points are shown on Imagery © 2025 Landsat/Copernicus, Map data © 2025 Google. The rail and road networks are obtained from the US TIGER database.

Figure 13. The 2022 aggregated 30 m maize and soybean map and CDL show mixed pixels in various landscapes. (a) Wetland/agriculture mosaics in North Dakota; (b) center-pivot irrigated fields in Nebraska; (c) strip fields in Pennsylvania. The coordinates of the center points are shown on Imagery © 2025 Landsat/Copernicus, Map data © 2025 Google.

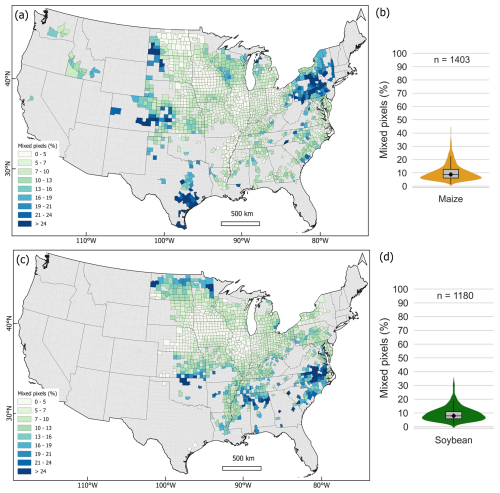

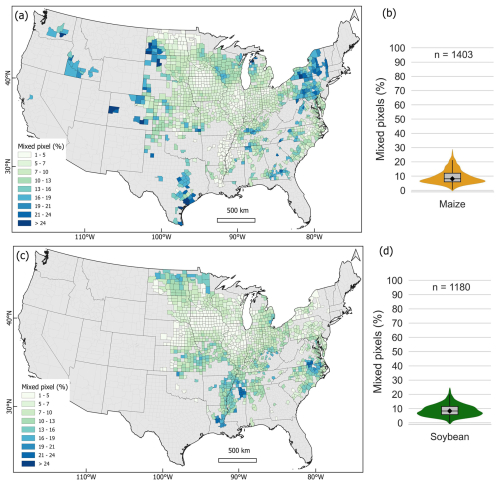

We obtained the percentage of mixed maize and soybean pixels at the county level to examine the spatial distribution of mixed pixel reduction from 30 to 10 m (Fig. 14). The counties with the highest maize and soybean production, such as those in Iowa, Illinois, and Nebraska, had the lowest mixed pixel percentages, ranging from 1 % to 10 %, while counties in the upper Midwest, the North and South Plains, the northeast and eastern coast had more mixed pixels (Fig. 14a, c). Overall, the median percentages of mixed maize and soybean pixels across all counties were 8 % and 9 %, respectively (Fig. 14b, d). Our results show that increasing the spatial resolution of crop mapping from 30 to 10 m would resolve the mixed pixels that make up a median of 8 %–9 % of maize and soybean pixels at the county scale, and would substantially benefit many states outside of the Midwest region.
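The county-level summary can be illustrated with a short sketch. It assumes that the percentage for a county is the number of mixed pixels divided by the sum of pure and mixed pixels of that crop, with ignored pixels excluded from the denominator; the text does not spell out the denominator, so this is an assumption. The county names and counts are hypothetical.

```python
from statistics import median


def mixed_percentage(pure_count, mixed_count):
    """Percentage of a county's crop pixels (pure + mixed) that are mixed."""
    total = pure_count + mixed_count
    if total == 0:
        return None  # the crop is absent from this county
    return 100.0 * mixed_count / total


# Hypothetical (pure, mixed) 30 m pixel counts per county for one crop.
county_counts = {
    "county_a": (90, 10),
    "county_b": (80, 20),
    "county_c": (95, 5),
}

percentages = {
    name: mixed_percentage(pure, mixed)
    for name, (pure, mixed) in county_counts.items()
}
valid = [p for p in percentages.values() if p is not None]
print(percentages)
print(median(valid))
```

The median is used here, as in the text, because county-level percentages are skewed by counties with little cropland and very fragmented fields.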

Figure 14. The percentages of 30 m mixed maize and soybean pixels at the county level derived from the 10 m map. (a) The spatial distribution of mixed maize pixels; (b) the statistical distribution of mixed maize pixels; (c–d) the same as (a)–(b) but for soybean. Counties accounting for 99.9 % coverage of the national maize and soybean cultivation derived from the 2022 NASS statistics are shown.

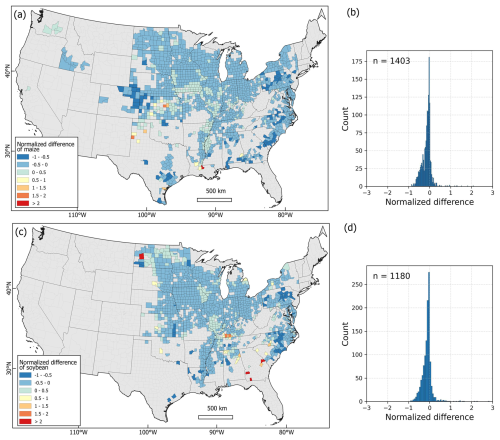

We conducted an additional analysis by comparing our aggregated 30 m map with the 30 m CDL for the maize and soybean classes. We applied a 50 % threshold to convert our aggregated 30 m map to binary maize and soybean maps and conducted a per-pixel comparison. We then summarized the per-pixel results at the county level as a normalized difference: the number of maize (or soybean) pixels in our map minus the number in the CDL, divided by the number in the CDL. Positive values indicate more maize or soybean pixels in our map, whereas negative values indicate more pixels in the CDL (Fig. 15). In several regions, our map presented more maize pixels, such as in northern Texas and southern Louisiana (Fig. 15a), and more soybean pixels, such as in western North Dakota and Georgia (Fig. 15c). In general, the CDL reported more maize and soybean pixels than our aggregated 30 m map in most counties (Fig. 15b, d), which is likely due to the exclusion of 10 m maize and soybean pixels below the 50 % threshold during aggregation.
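The county-level ratio used in this comparison can be written out as a small function. This is a sketch under the definition given in the text, that is, the difference in pixel counts between our map and the CDL divided by the CDL count; the function name, the handling of counties with no CDL pixels, and the sample counts are hypothetical.

```python
def normalized_difference(ours_count, cdl_count):
    """(ours - CDL) / CDL for one county and one crop.

    Returns None when the CDL has no pixels of the crop, because the ratio
    is undefined in that case (an assumed convention).
    """
    if cdl_count == 0:
        return None
    return (ours_count - cdl_count) / cdl_count


# Hypothetical (our map, CDL) 30 m pixel counts for one crop.
counts = {
    "county_a": (900, 1000),   # fewer pixels in our map -> negative value
    "county_b": (1100, 1000),  # more pixels in our map -> positive value
    "county_c": (50, 0),       # crop absent from the CDL -> undefined
}

differences = {
    name: normalized_difference(ours, cdl) for name, (ours, cdl) in counts.items()
}
print(differences)
```

Because the denominator is the CDL count, the ratio is asymmetric: counties with very few CDL pixels of a crop can produce large positive values from small absolute differences.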

Figure 15. Comparison of our aggregated 30 m map with the 2022 30 m CDL at the county level. (a) Normalized difference of maize pixels; (b) histogram of the normalized difference of maize pixels; (c–d) the same as (a)–(b) but for soybean. Counties accounting for 99.9 % coverage of the national maize and soybean cultivation derived from the 2022 NASS statistics are shown.

To estimate the number of mixed pixels that might be reduced by improving the CDL's spatial resolution from 30 to 10 m, we overlaid the 2022 30 m CDL on our 30 m fractional cover map and computed the number and proportion of CDL maize and soybean pixels that were mixed pixels at the county level (Fig. 16). The mixed pixels derived from the CDL showed spatial distribution patterns similar to those derived from the aggregated 30 m map (see Fig. 14 above). The median percentage of mixed pixels across all counties was 8 % for both maize and soybean (Fig. 16b, d), which is close to the values of 8 % for maize and 9 % for soybean derived from our aggregated 30 m map (see Fig. 14 above). These consistent mixed pixel estimates from our map and the CDL indicate substantial benefits of 10 m crop maps in reducing mixed pixels over existing 30 m products, especially for regions outside of the Midwest, as illustrated in our analyses.

Figure 16. The percentages of 30 m mixed maize and soybean pixels at the county level by comparing our aggregated 30 m map and the 2022 30 m CDL. (a) The spatial distribution of mixed maize pixels; (b) the statistical distribution of mixed maize pixels; (c–d) the same as (a)–(b) but for soybean. Counties accounting for 99.9 % coverage of the national maize and soybean cultivation derived from the 2022 NASS statistics are shown.

4.2 The potential of 10 m crop maps in finer-scale agricultural monitoring

Higher-resolution crop maps have great potential to facilitate remote-sensing-based agricultural applications at finer scales. For example, the Crop Sequence Boundaries (CSB), which delineate polygons of homogeneous cropping sequences with 8-year moving windows, have been developed by the USDA based on the CDL (Hunt et al., 2023). In producing the CSB, the 30 m CDL was resampled to 10 m resolution to improve the masking of road networks, because roads and railways in rural areas are typically less than 30 m wide. Consequently, the resampled 10 m maps may delineate inaccurate field boundaries due to mixed pixels (Fig. 17). The CSB delineated large homogeneous fields well (Fig. 17a) but showed more fragments when encountering within-field cropping variations (Fig. 17b). The misalignments between the delineated field edges and pixel boundaries are extensive in heterogeneous landscapes and small fields (Fig. 17c, d), and thus the polygon-based crop acreage derived from CSB layers may be biased. Alternatively, using maps originally produced at higher resolution (e.g., 10 m) can yield more accurate field delineation, cropping sequences, and crop area estimates with smaller uncertainties (Duveiller and Defourny, 2010; Ozdogan and Woodcock, 2006; Yan and Roy, 2014).

Figure 17. The aggregated 30 m maize and soybean map overlaid with Crop Sequence Boundaries. Columns (a)–(d) are selected sites in Illinois, Mississippi, North Dakota, and Iowa. All panels are displayed at the same scale (10 km × 10 km). The coordinates of the center points are shown. The 2015–2022 Crop Sequence Boundaries are obtained from the USDA NASS. Satellite base map is from Imagery © 2025 Landsat/Copernicus, Map data © 2025 Google.

With higher-resolution satellite imagery available from continuous observations (e.g., Sentinel-1 and Sentinel-2) and upcoming missions (e.g., Landsat Next, NASA-ISRO SAR Mission (NISAR)), we anticipate that 10 m crop maps will play a more critical role in agricultural monitoring from the field to global scales.

4.3 The robustness of temporal metrics for annual crop map production

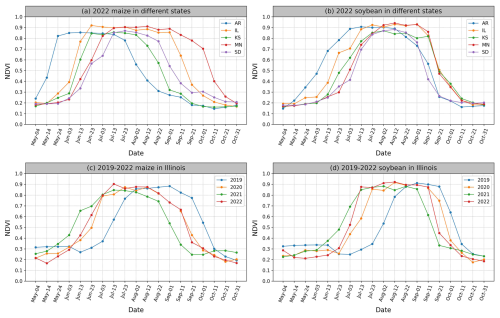

Stacking satellite-derived time-series maps is one of the most common practices to investigate long-term agriculture-related land cover and land use change, such as cropping history (Blickensdörfer et al., 2022; Johnson, 2019), crop and cropland expansion (Lark et al., 2020; Potapov et al., 2021a; Song et al., 2021b; Zalles et al., 2019), and cropland intensification (Kehoe et al., 2017; Marin et al., 2022). However, in countries of large extent such as the US, the spatiotemporal consistency of multi-year crop classifications can be affected by both intra-annual and interannual variations in crop phenology (Fig. 18). For example, the 2022 NDVI time series for maize and soybean showed noticeably different crop progress across the CONUS (Fig. 18a, b). In Arkansas, maize growth peaked in mid-May and started senescence in early August, whereas maize in the Midwest states was at early growing stages in mid-May and at the peak of the growing season in early August. Soybean also showed noticeable disparities in crop progress across the US states. Interannual phenological shifts also impede classification consistency (Fig. 18c, d). In Illinois, similar NDVI profiles in 2020 and 2022 suggested overall consistent growing progress, while the patterns in 2019 and 2021 showed larger interannual variations. In 2021, Illinois experienced an earlier planting pace for maize and soybean, partly due to favorable spring weather conditions and soybean varieties adapted to early planting (Nafziger, 2024). In 2019, crop phenology shifted substantially as a result of planting delays caused by extremely heavy precipitation in the spring (Manoochehr et al., 2021). Consequently, by the end of May 2019, only 24 % of the maize in Illinois had been planted, compared to 95 % in the previous year and a five-year average of 49 %; for soybean, 9 % had been planted, compared to 79 % in the previous year and a five-year average of 51 % (NASS CPR, 2024).

Figure 18. NDVI time series for maize and soybean from representative sites. (a) 2022 maize NDVI in Arkansas (AR), Illinois (IL), Kansas (KS), Minnesota (MN), South Dakota (SD); (b) the same as (a) but for soybean; (c) 2019–2022 interannual NDVI variations for maize in Illinois; (d) the same as (c) but for soybean. The details about the sites are shown in Fig. S4 and Table S6.

Utilizing the multi-temporal metrics to relatively normalize crop phenological variations, our approach can be applied to generate annual crop maps over large areas, as also illustrated for South America in Song et al. (2021b). Our four-year sampling designs generated large field samples, allowing us to collect representative training data from various growing conditions and geographical regions. Our workflow generated consistently accurate maize and soybean maps over the entire CONUS, from 2019 to 2022. The map accuracies for 2019 (an abnormally wet year), and 2020 and 2021 (both years with normal weather) are consistent with those of 2022 (see Table 1 above).
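One way to see why multi-temporal metrics dampen phenological shifts is that statistics computed from ranked values do not depend on when in the season each value occurred. The sketch below assumes a simple metric set (minimum, median, maximum, and amplitude of a 10 d NDVI series) purely for illustration; it does not reproduce the metric set used in this study, and the function name and NDVI values are hypothetical.

```python
from statistics import median


def temporal_metrics(series):
    """Order-independent summary statistics of a seasonal time series."""
    ranked = sorted(series)
    return {
        "min": ranked[0],
        "median": median(ranked),
        "max": ranked[-1],
        "amplitude": ranked[-1] - ranked[0],
    }


# Hypothetical 10 d NDVI composites: the same growth curve, with the second
# series shifted two time steps later (for example, a delayed planting year).
early = [0.2, 0.4, 0.7, 0.9, 0.8, 0.5, 0.3, 0.2, 0.2]
late = [0.2, 0.2, 0.2, 0.4, 0.7, 0.9, 0.8, 0.5, 0.3]

print(temporal_metrics(early))
print(temporal_metrics(late))
```

The two series differ at almost every date, yet their metrics are identical. In real data the invariance is only approximate, because a fixed May to October window truncates curves that shift toward its edges.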

The annual 10 m maize and soybean maps over the CONUS from 2019 to 2022 are openly accessible at the website of the Global Land Analysis and Discovery (GLAD) team at the University of Maryland (https://glad.umd.edu/dataset/mapping-crops-10-m-resolution-united-states, last access: 26 December 2025). The dataset is also available at https://doi.org/10.6084/m9.figshare.28934993.v2 (Li et al., 2025). The dataset includes a set of GeoTIFF images in the EPSG:4326 spatial reference system. The values 0, 1, 2, and 255 represent other, maize, soybean, and no data, respectively. External data used in this study are openly accessible online: (1) the Sentinel-2 data were downloaded from Google Cloud Platform (https://console.cloud.google.com/marketplace/product/esa-public-data/sentinel2?project=s2data, Google Cloud Console, 2025); (2) the Cropland Data Layer was downloaded from the US Department of Agriculture (USDA) National Agricultural Statistics Service (NASS) (https://www.nass.usda.gov/Research_and_Science/Cropland/Release/index.php, NASS CDL, 2025); (3) the TanDEM-X digital elevation model was downloaded from the German Aerospace Center (https://tandemx-science.dlr.de/, DLR, 2015); (4) the agricultural statistics for CONUS were retrieved from the USDA NASS (https://www.nass.usda.gov/Quick_Stats/, USDA NASS, 2025); (5) the Crop Sequence Boundaries were obtained from the USDA NASS (https://www.nass.usda.gov/Research_and_Science/Crop-Sequence-Boundaries/index.php, NASS CSB, 2024); (6) the road and rail networks were downloaded from the US TIGER database (https://www.census.gov/geographies/mapping-files/time-series/geo/tiger-line-file.html, US Census Bureau, 2025).
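For readers working with the released files, the class legend can be applied as in the following sketch. It assumes the pixel values of a tile have already been read into a flat Python sequence by a raster library of the reader's choice (file reading is not shown); the function name and the sample values are hypothetical, while the class codes are those listed above.

```python
from collections import Counter

# Legend of the released maps: value -> class name.
CLASS_NAMES = {0: "other", 1: "maize", 2: "soybean", 255: "no data"}
NO_DATA = 255


def class_shares(pixel_values):
    """Share of each class among valid (non no-data) pixels."""
    counts = Counter(pixel_values)
    unknown = set(counts) - set(CLASS_NAMES)
    if unknown:
        raise ValueError(f"unexpected class codes: {sorted(unknown)}")
    valid = sum(n for code, n in counts.items() if code != NO_DATA)
    if valid == 0:
        return {}
    return {
        CLASS_NAMES[code]: counts[code] / valid
        for code in CLASS_NAMES
        if code != NO_DATA
    }


# Hypothetical pixel values from a small window of one tile.
sample = [0, 0, 1, 2, 2, 2, 255, 255]
shares = class_shares(sample)
print(shares)
```

Pixel shares are not area shares in a geographic coordinate system, because the ground area of a pixel changes with latitude; area estimates require a per-pixel area weight or reprojection to an equal-area system.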

Crop maps at 10 m spatial resolution bring substantial benefits for agricultural applications compared to 30 m products, for smallholder as well as industrial agricultural countries. In this study, we developed 10 m maize and soybean maps over the Contiguous US (CONUS) from 2019 to 2022, using all available Sentinel-2 observations and field surveys, with overall accuracies consistently greater than 95 %. We explicitly examined the benefits of improving the spatial resolution from 30 to 10 m by quantifying the reduction in mixed pixels. Our analysis showed that, across all counties in the US, the 10 m maps reduced mixed pixels by a median of 8 % for maize and 9 % for soybean compared to the aggregated 30 m maps, with most mixed pixels occurring along field edges, road networks, and in heterogeneous fields. Our workflow generated annual maps that are consistent across space and over time. Our 10 m crop maps were produced at the end of the growing season, around 3–4 months earlier than the official 30 m Cropland Data Layer. As more Sentinel-2-like data become accessible from current observations and planned missions such as Landsat Next, the 10 m crop maps presented in this study will greatly benefit agricultural applications, including field boundary extraction, crop sequence delineation, crop condition monitoring, and precision fertilization and irrigation, from field to global scales.

The supplement related to this article is available online at https://doi.org/10.5194/essd-18-2227-2026-supplement.

HL: Software, Formal analysis, Investigation, Writing – Original Draft, Writing – Review & Editing, Visualization. XPS: Conceptualization, Methodology, Software, Formal analysis, Investigation, Writing – Original Draft, Writing – Review & Editing, Supervision, Project administration, Resources, Funding acquisition. BA: Formal analysis, Investigation. JP: Formal analysis, Investigation, Data Curation. AL: Formal analysis, Investigation. AP: Formal analysis, Investigation, Data Curation, Writing – Review & Editing. AB: Investigation. PP: Methodology, Investigation, Software, Writing – Review & Editing. AK: Methodology, Investigation, Writing – Review & Editing. VZ: Methodology, Investigation. AHS: Investigation. SMJ: Investigation, Writing – Review & Editing. AHP: Investigation. COD: Investigation. XL: Investigation, Writing – Review & Editing. TK: Investigation, Writing – Review & Editing. ZS: Investigation. ST: Investigation. EB: Investigation. HKK: Investigation. AK: Investigation. SVS: Methodology, Writing – Review & Editing. MCH: Conceptualization, Methodology, Resources, Investigation, Funding Acquisition.

The contact author has declared that none of the authors has any competing interests.

Publisher's note: Copernicus Publications remains neutral with regard to jurisdictional claims made in the text, published maps, institutional affiliations, or any other geographical representation in this paper. The authors bear the ultimate responsibility for providing appropriate place names. Views expressed in the text are those of the authors and do not necessarily reflect the views of the publisher.

The authors acknowledge the University of Maryland supercomputing resources (http://hpcc.umd.edu, last access: 26 December 2025) made available for conducting the research reported in this paper.

This research is supported by the Google Academic Research Awards, NASA Land-Cover and Land-Use Change Program (80NSSC24K0188, 80NSSC23K0526), NASA Acres Program (80NSSC23M0034), Bezos Earth Fund, and the University of Maryland.

This paper was edited by Peng Zhu and reviewed by four anonymous referees.

Alami Machichi, M., El Mansouri, L., Imani, Y., Bourja, O., Lahlou, O., Zennayi, Y., Bourzeix, F., Hanadé Houmma, I., and Hadria, R.: Crop mapping using supervised machine learning and deep learning: a systematic literature review, Int. J. Remote Sens., 44, 2717–2753, https://doi.org/10.1080/01431161.2023.2205984, 2023.

Badhwar, G. D.: Classification of corn and soybeans using multitemporal thematic mapper data, Remote Sens. Environ., 16, 175–181, https://doi.org/10.1016/0034-4257(84)90061-0, 1984.

Belgiu, M. and Drăguţ, L.: Random forest in remote sensing: A review of applications and future directions, ISPRS J. Photogramm., 114, 24–31, https://doi.org/10.1016/j.isprsjprs.2016.01.011, 2016.

Blickensdörfer, L., Schwieder, M., Pflugmacher, D., Nendel, C., Erasmi, S., and Hostert, P.: Mapping of crop types and crop sequences with combined time series of Sentinel-1, Sentinel-2 and Landsat 8 data for Germany, Remote Sens. Environ., 269, 112831, https://doi.org/10.1016/j.rse.2021.112831, 2022.

Bolton, D. K. and Friedl, M. A.: Forecasting crop yield using remotely sensed vegetation indices and crop phenology metrics, Agr. Forest Meteorol., 173, 74–84, https://doi.org/10.1016/j.agrformet.2013.01.007, 2013.

Boryan, C., Yang, Z., Mueller, R., and Craig, M.: Monitoring US agriculture: the US Department of Agriculture, National Agricultural Statistics Service, Cropland Data Layer Program, Geocarto Int., 26, 341–358, https://doi.org/10.1080/10106049.2011.562309, 2011.

Breiman, L.: Random forests, Mach. Learn., 45, 5–32, https://doi.org/10.1023/a:1010933404324, 2001.

CROME: Crop Map of England, https://environment.data.gov.uk/dataset/cc389fe9-f026-4b20-a80f-f424ee833ea6, last access: 28 May 2024.

de Abelleyra, D., Veron, S., Banchero, S., Mosciaro, M. J., Propato, T., Ferraina, A., Taffarel, M. C. G., Dacunto, L., Franzoni, A., and Volante, J.: First large extent and high resolution cropland and crop type map of Argentina, in: 2020 IEEE Latin American GRSS & ISPRS Remote Sensing Conference (LAGIRS), IEEE, 392–396, https://doi.org/10.1109/LAGIRS48042.2020.9165610, 2020.

Defourny, P., Bontemps, S., Bellemans, N., Cara, C., Dedieu, G., Guzzonato, E., Hagolle, O., Inglada, J., Nicola, L., Rabaute, T., Savinaud, M., Udroiu, C., Valero, S., Bégué, A., Dejoux, J.-F., El Harti, A., Ezzahar, J., Kussul, N., Labbassi, K., Lebourgeois, V., Miao, Z., Newby, T., Nyamugama, A., Salh, N., Shelestov, A., Simonneaux, V., Traore, P. S., Traore, S. S., and Koetz, B.: Near real-time agriculture monitoring at national scale at parcel resolution: Performance assessment of the Sen2-Agri automated system in various cropping systems around the world, Remote Sens. Environ., 221, 551–568, https://doi.org/10.1016/j.rse.2018.11.007, 2019.

DeFries, R., Hansen, M., and Townshend, J.: Global discrimination of land cover types from metrics derived from AVHRR pathfinder data, Remote Sens. Environ., 54, 209–222, https://doi.org/10.1016/0034-4257(95)00142-5, 1995.

Deines, J. M., Swatantran, A., Ye, D., Myers, B., Archontoulis, S., and Lobell, D. B.: Field-scale dynamics of planting dates in the US Corn Belt from 2000 to 2020, Remote Sens. Environ., 291, https://doi.org/10.1016/j.rse.2023.113551, 2023.

DLR: TanDEM-X – Digital Elevation Model (DEM) – Global, 12 m, https://tandemx-science.dlr.de/ (last access: 26 December 2025), 2015.

Dong, J., Xiao, X., Menarguez, M. A., Zhang, G., Qin, Y., Thau, D., Biradar, C., and Moore, B.: Mapping paddy rice planting area in northeastern Asia with Landsat 8 images, phenology-based algorithm and Google Earth Engine, Remote Sens. Environ., 185, 142–154, https://doi.org/10.1016/j.rse.2016.02.016, 2016.

Duveiller, G. and Defourny, P.: A conceptual framework to define the spatial resolution requirements for agricultural monitoring using remote sensing, Remote Sens. Environ., 114, https://doi.org/10.1016/j.rse.2010.06.001, 2010.

ESA: Monitoring crop health across the Netherlands, https://www.esa.int/Applications/Observing_the_Earth/Copernicus/Sentinel-1/Monitoring_crop_health_across_the_Netherlands, last access: 20 June 2024.

Escobar, N., Tizado, E. J., zu Ermgassen, E. K. H. J., Löfgren, P., Börner, J., and Godar, J.: Spatially-explicit footprints of agricultural commodities: Mapping carbon emissions embodied in Brazil's soy exports, Global Environ. Chang., 62, 102067, https://doi.org/10.1016/j.gloenvcha.2020.102067, 2020.

EU: The High Resolution Layer Crop Types (CTY), EEA geospatial data catalogue, https://sdi.eea.europa.eu/catalogue/srv/api/records/9db29b07-5968-4ce0-8351-1e356b3d7d47 (last access: 21 November 2025), 2024.

Fisette, T., Rollin, P., Aly, Z., Campbell, L., Daneshfar, B., Filyer, P., Smith, A., Davidson, A., Shang, J., and Jarvis, I.: AAFC annual crop inventory, 2013 Second International Conference on Agro-Geoinformatics (Agro-Geoinformatics), IEEE, 270–274, https://doi.org/10.1109/Argo-Geoinformatics.2013.6621920, 2013.

Foerster, S., Kaden, K., Foerster, M., and Itzerott, S.: Crop type mapping using spectral–temporal profiles and phenological information, Comput. Electron. Agr., 89, 30–40, https://doi.org/10.1016/j.compag.2012.07.015, 2012.

Frantz, D., Haß, E., Uhl, A., Stoffels, J., and Hill, J.: Improvement of the Fmask algorithm for Sentinel-2 images: Separating clouds from bright surfaces based on parallax effects, Remote Sens. Environ., 215, 471–481, https://doi.org/10.1016/j.rse.2018.04.046, 2018.

Fritz, S., See, L., McCallum, I., You, L., Bun, A., Moltchanova, E., Duerauer, M., Albrecht, F., Schill, C., Perger, C., Havlik, P., Mosnier, A., Thornton, P., Wood-Sichra, U., Herrero, M., Becker-Reshef, I., Justice, C., Hansen, M., Gong, P., Abdel Aziz, S., Cipriani, A., Cumani, R., Cecchi, G., Conchedda, G., Ferreira, S., Gomez, A., Haffani, M., Kayitakire, F., Malanding, J., Mueller, R., Newby, T., Nonguierma, A., Olusegun, A., Ortner, S., Rajak, D. R., Rocha, J., Schepaschenko, D., Schepaschenko, M., Terekhov, A., Tiangwa, A., Vancutsem, C., Vintrou, E., Wenbin, W., van der Velde, M., Dunwoody, A., Kraxner, F., and Obersteiner, M.: Mapping global cropland and field size, Glob. Change Biol., 21, 1980–1992, https://doi.org/10.1111/gcb.12838, 2015.

Gao, F., Anderson, M. C., Zhang, X., Yang, Z., Alfieri, J. G., Kustas, W. P., Mueller, R., Johnson, D. M., and Prueger, J. H.: Toward mapping crop progress at field scales through fusion of Landsat and MODIS imagery, Remote Sens. Environ., 188, 9–25, https://doi.org/10.1016/j.rse.2016.11.004, 2017.

Ghassemi, B., Dujakovic, A., Żółtak, M., Immitzer, M., Atzberger, C., and Vuolo, F.: Designing a European-wide crop type mapping approach based on machine learning algorithms using LUCAS field survey and Sentinel-2 data, Remote Sens., 14, https://doi.org/10.3390/rs14030541, 2022.

Google Cloud Console: Sentinel-2, https://console.cloud.google.com/marketplace/product/esa-public-data/sentinel2?project=s2data (last access: 26 December 2025), 2025.

Griffiths, P., Nendel, C., and Hostert, P.: Intra-annual reflectance composites from Sentinel-2 and Landsat for national-scale crop and land cover mapping, Remote Sens. Environ., 220, 135–151, https://doi.org/10.1016/j.rse.2018.10.031, 2019.

Han, J., Zhang, Z., Luo, Y., Cao, J., Zhang, L., Zhang, J., and Li, Z.: The RapeseedMap10 database: annual maps of rapeseed at a spatial resolution of 10 m based on multi-source data, Earth Syst. Sci. Data, 13, 2857–2874, https://doi.org/10.5194/essd-13-2857-2021, 2021.

Hansen, M. C., Potapov, P. V., Moore, R., Hancher, M., Turubanova, S. A., Tyukavina, A., Thau, D., Stehman, S. V., Goetz, S. J., Loveland, T. R., Kommareddy, A., Egorov, A., Chini, L., Justice, C. O., and Townshend, J. R.: High-resolution global maps of 21st-century forest cover change, Science, 342, 850–853, https://doi.org/10.1126/science.1244693, 2013.

Huang, Y., Qiu, B., Yang, P., Wu, W., Chen, X., Zhu, X., Xu, S., Wang, L., Dong, Z., Zhang, J., Berry, J., Tang, Z., Tan, J., Duan, D., Peng, Y., Lin, D., Cheng, F., Liang, J., Huang, H., and Chen, C.: National-scale 10 m annual maize maps for China and the contiguous United States using a robust index from Sentinel-2 time series, Comput. Electron. Agr., 221, 109018, https://doi.org/10.1016/j.compag.2024.109018, 2024.

Hunt, K. A., Abernethy, J., Bowman, M., Wallander, S., and Williams, R.: Crop Sequence Boundaries (CSB): Delineated fields using remotely sensed crop rotations, USDA-NASS, Washington, D.C., USA, https://www.nass.usda.gov/Research_and_Science/Crop-Sequence-Boundaries/index.php (last access: 24 March 2026), 2023.

Immitzer, M., Vuolo, F., and Atzberger, C.: First experience with Sentinel-2 data for crop and tree species classifications in Central Europe, Remote Sens., 8, 166, https://doi.org/10.3390/rs8030166, 2016.

Inglada, J., Arias, M., Tardy, B., Hagolle, O., Valero, S., Morin, D., Dedieu, G., Sepulcre, G., Bontemps, S., Defourny, P., and Koetz, B.: Assessment of an operational system for crop type map production using high temporal and spatial resolution satellite optical imagery, Remote Sens., 7, 12356–12379, https://doi.org/10.3390/rs70912356, 2015.

Johnson, D. M.: Using the Landsat archive to map crop cover history across the United States, Remote Sens. Environ., 232, 111286, https://doi.org/10.1016/j.rse.2019.111286, 2019.

Johnson, D. M. and Mueller, R.: Pre- and within-season crop type classification trained with archival land cover information, Remote Sens. Environ., 264, https://doi.org/10.1016/j.rse.2021.112576, 2021.

Joshi, A., Pradhan, B., Gite, S., and Chakraborty, S.: Remote-sensing data and deep-learning techniques in crop mapping and yield prediction: A systematic review, Remote Sens., 15, 2014, https://doi.org/10.3390/rs15082014, 2023.

Kehoe, L., Romero-Munoz, A., Polaina, E., Estes, L., Kreft, H., and Kuemmerle, T.: Biodiversity at risk under future cropland expansion and intensification, Nature Ecology & Evolution, 1, 1129–1135, https://doi.org/10.1038/s41559-017-0234-3, 2017.

Kerner, H. R., Sahajpal, R., Pai, D. B., Skakun, S., Puricelli, E., Hosseini, M., Meyer, S., and Becker-Reshef, I.: Phenological normalization can improve in-season classification of maize and soybean: A case study in the central US Corn Belt, Sci. Remote Sens., https://doi.org/10.1016/j.srs.2022.100059, 2022.

Khan, A., Hansen, M. C., Potapov, P., Stehman, S. V., and Chatta, A. A.: Landsat-based wheat mapping in the heterogeneous cropping system of Punjab, Pakistan, Int. J. Remote Sens., 37, 1391–1410, https://doi.org/10.1080/01431161.2016.1151572, 2016.

Khan, A., Hansen, M., Potapov, P., Adusei, B., Pickens, A., Krylov, A., and Stehman, S.: Evaluating Landsat and RapidEye data for winter wheat mapping and area estimation in Punjab, Pakistan, Remote Sens., 10, 489, https://doi.org/10.3390/rs10040489, 2018.

Khan, A., Hansen, M. C., Potapov, P., Adusei, B., Stehman, S. V., and Steininger, M. K.: An operational automated mapping algorithm for in-season estimation of wheat area for Punjab, Pakistan, Int. J. Remote Sens., 42, 3833–3849, https://doi.org/10.1080/01431161.2021.1883200, 2021.

King, L., Adusei, B., Stehman, S. V., Potapov, P. V., Song, X.-P., Krylov, A., Di Bella, C., Loveland, T. R., Johnson, D. M., and Hansen, M. C.: A multi-resolution approach to national-scale cultivated area estimation of soybean, Remote Sens. Environ., 195, 13–29, https://doi.org/10.1016/j.rse.2017.03.047, 2017.

Konduri, V. S., Kumar, J., Hargrove, W. W., Hoffman, F. M., and Ganguly, A. R.: Mapping crops within the growing season across the United States, Remote Sens. Environ., 251, https://doi.org/10.1016/j.rse.2020.112048, 2020.

Lambert, M.-J., Traoré, P. C. S., Blaes, X., Baret, P., and Defourny, P.: Estimating smallholder crops production at village level from Sentinel-2 time series in Mali's cotton belt, Remote Sens. Environ., 216, 647–657, https://doi.org/10.1016/j.rse.2018.06.036, 2018.

Lark, T. J., Spawn, S. A., Bougie, M., and Gibbs, H. K.: Cropland expansion in the United States produces marginal yields at high costs to wildlife, Nat. Commun., 11, https://doi.org/10.1038/s41467-020-18045-z, 2020.

Larsen, A. E., Hendrickson, B. T., Dedeic, N., and MacDonald, A. J.: Taken as a given: Evaluating the accuracy of remotely sensed crop data in the USA, Agr. Syst., 141, 121–125, https://doi.org/10.1016/j.agsy.2015.10.008, 2015.

Li, H., Song, X.-P., Hansen, M. C., Becker-Reshef, I., Adusei, B., Pickering, J., Wang, L., Wang, L., Lin, Z., Zalles, V., Potapov, P., Stehman, S. V., and Justice, C.: Development of a 10-m resolution maize and soybean map over China: Matching satellite-based crop classification with sample-based area estimation, Remote Sens. Environ., 294, https://doi.org/10.1016/j.rse.2023.113623, 2023.

Li, H., Song, X.-P., Adusei, B., Pickering, J., Lima, A., Poulson, A., Baggett, A., Potapov, P., Khan, A., Zalles, V., Hernandez-Serna, A., Jantz, S. M., Pickens, A. H., Ortiz-Dominguez, C., Li, X., Kerr, T., Song, Z., Turubanova, S., Bongwele, E., Koy Kondjo, H., Komarova, A., Stehman, S. V., and Hansen, M. C.: 2019–2022 10-m maize and soybean maps over the United States, FigShare [data set], https://doi.org/10.6084/m9.figshare.28934993.v2, 2025.

Li, X.-Y., Li, X., Fan, Z., Mi, L., Kandakji, T., Song, Z., Li, D., and Song, X.-P.: Civil war hinders crop production and threatens food security in Syria, Nature Food, 3, 38–46, https://doi.org/10.1038/s43016-021-00432-4, 2022.

Lin, C., Zhong, L., Song, X.-P., Dong, J., Lobell, D. B., and Jin, Z.: Early- and in-season crop type mapping without current-year ground truth: Generating labels from historical information via a topology-based approach, Remote Sens. Environ., 274, https://doi.org/10.1016/j.rse.2022.112994, 2022.

Lin, F., Li, X., Jia, N., Feng, F., Huang, H., Huang, J., Fan, S., Ciais, P., and Song, X.-P.: The impact of Russia-Ukraine conflict on global food security, Glob. Food Secur., 36, 100661, https://doi.org/10.1016/j.gfs.2022.100661, 2023.

Lobell, D. B., Deines, J. M., and Tommaso, S. D.: Changes in the drought sensitivity of US maize yields, Nature Food, 1, 729–735, https://doi.org/10.1038/s43016-020-00165-w, 2020.

Lowder, S. K., Skoet, J., and Raney, T.: The number, size, and distribution of farms, smallholder farms, and family farms worldwide, World Dev., 87, 16–29, https://doi.org/10.1016/j.worlddev.2015.10.041, 2016.

Luo, Y., Zhang, Z., Zhang, L., Han, J., Cao, J., and Zhang, J.: Developing high-resolution crop maps for major crops in the European Union based on transductive transfer learning and limited ground data, Remote Sens., 14, 1809, https://doi.org/10.3390/rs14081809, 2022.

Malingreau, J.-P.: Global vegetation dynamics: Satellite observations over Asia, Int. J. Remote Sens., 7, 1121–1146, https://doi.org/10.1080/01431168608948914, 1986.

Manoochehr, S., Khoshmanesh, M., Ojha, C., Werth, S., Kerner, H., Carlson, G., Sherpa, S. F., Zhai, G., and Lee, J.-C.: Persistent impact of spring floods on crop loss in U.S. Midwest, Weather and Climate Extremes, 34, https://doi.org/10.1016/j.wace.2021.100392, 2021.

Marin, F. R., Zanon, A. J., Monzon, J. P., Andrade, J. F., Silva, E. H. F. M., Richter, G. L., Antolin, L. A. S., Ribeiro, B. S. M. R., Ribas, G. G., Battisti, R., Heinemann, A. B., and Grassini, P.: Protecting the Amazon forest and reducing global warming via agricultural intensification, Nature Sustainability, 1–9, https://doi.org/10.1038/s41893-022-00968-8, 2022.

Massey, R., Sankey, T. T., Congalton, R. G., Yadav, K., Thenkabail, P. S., Ozdogan, M., and Sánchez Meador, A. J.: MODIS phenology-derived, multi-year distribution of conterminous U.S. crop types, Remote Sens. Environ., 198, 490–503, https://doi.org/10.1016/j.rse.2017.06.033, 2017.

Mei, Q., Zhang, Z., Han, J., Song, J., Dong, J., Wu, H., Xu, J., and Tao, F.: ChinaSoyArea10m: a dataset of soybean-planting areas with a spatial resolution of 10 m across China from 2017 to 2021, Earth Syst. Sci. Data, 16, 3213–3231, https://doi.org/10.5194/essd-16-3213-2024, 2024.

Nafziger, E.: Early-season soybean management for 2019, https://farmdoc.illinois.edu/field-crop-production/crop_production/early-season-soybean-management-for-2019.html, last access: 20 June 2024.

NASS CDL: CropScape – NASS CDL Program, https://www.nass.usda.gov/Research_and_Science/Cropland/Release/index.php (last access: 26 December 2025), 2025.

NASS CPR: Crop Progress Report, https://www.nass.usda.gov/Publications/National_Crop_Progress/, last access: 20 June 2024.

NASS CSB: Crop Sequence Boundaries (CSB), https://www.nass.usda.gov/Research_and_Science/Crop-Sequence-Boundaries/index.php (last access: 26 December 2025), 2024.

Olofsson, P., Foody, G. M., Stehman, S. V., and Woodcock, C. E.: Making better use of accuracy data in land change studies: Estimating accuracy and area and quantifying uncertainty using stratified estimation, Remote Sens. Environ., 129, 122–131, https://doi.org/10.1016/j.rse.2012.10.031, 2013.

Ouyang, Z., Jackson, R. B., McNicol, G., Fluet-Chouinard, E., Runkle, B. R. K., Papale, D., Knox, S. H., Cooley, S., Delwiche, K. B., Feron, S., Irvin, J. A., Malhotra, A., Muddasir, M., Sabbatini, S., Alberto, M. C. R., Cescatti, A., Chen, C.-L., Dong, J., Fong, B. N., Guo, H., Hao, L., Iwata, H., Jia, Q., Ju, W., Kang, M., Li, H., Kim, J., Reba, M. L., Nayak, A. K., Roberti, D. R., Ryu, Y., Swain, C. K., Tsuang, B., Xiao, X., Yuan, W., Zhang, G., and Zhang, Y.: Paddy rice methane emissions across Monsoon Asia, Remote Sens. Environ., 284, 113335, https://doi.org/10.1016/j.rse.2022.113335, 2023.

Ozdogan, M. and Woodcock, C. E.: Resolution dependent errors in remote sensing of cultivated areas, Remote Sens. Environ., 103, 203–217, https://doi.org/10.1016/j.rse.2006.04.004, 2006.

Potapov, P., Hansen, M. C., Kommareddy, I., Kommareddy, A., Turubanova, S., Pickens, A., Adusei, B., Tyukavina, A., and Ying, Q.: Landsat analysis ready data for global land cover and land cover change mapping, Remote Sens., 12, 426, https://doi.org/10.3390/rs12030426, 2020.

Potapov, P., Turubanova, S., Hansen, M. C., Tyukavina, A., Zalles, V., Khan, A., Song, X.-P., Pickens, A., Shen, Q., and Cortez, J.: Global maps of cropland extent and change show accelerated cropland expansion in the twenty-first century, Nature Food, 3, 19–28, https://doi.org/10.1038/s43016-021-00429-z, 2021a.

Potapov, P., Li, X., Hernandez-Serna, A., Tyukavina, A., Hansen, M. C., Kommareddy, A., Pickens, A., Turubanova, S., Tang, H., Silva, C. E., Armston, J., Dubayah, R., Blair, J. B., and Hofton, M.: Mapping global forest canopy height through integration of GEDI and Landsat data, Remote Sens. Environ., 253, https://doi.org/10.1016/j.rse.2020.112165, 2021b.

Probst, P., Wright, M. N., and Boulesteix, A.-L.: Hyperparameters and tuning strategies for random forest, WIRes Data Min. Knowl., 9, e1301, https://doi.org/10.1002/widm.1301, 2019.

Remelgado, R., Zaitov, S., Kenjabaev, S., Stulina, G., Sultanov, M., Ibrakhimov, M., Akhmedov, M., Dukhovny, V., and Conrad, C.: A crop type dataset for consistent land cover classification in Central Asia, Scientific Data, 7, 250, https://doi.org/10.1038/s41597-020-00591-2, 2020.

Roy, D. P., Li, Z., and Zhang, K. H.: Adjustment of Sentinel-2 Multi-Spectral Instrument (MSI) red-edge band reflectance to nadir BRDF adjusted reflectance (NBAR) and quantification of red-edge band BRDF Effects, Remote Sens., 9, https://doi.org/10.3390/rs9121325, 2017a.

Roy, D. P., Li, J., Zhang, H. K., Yan, L., Huang, H., and Li, Z.: Examination of Sentinel-2A multi-spectral instrument (MSI) reflectance anisotropy and the suitability of a general method to normalize MSI reflectance to nadir BRDF adjusted reflectance, Remote Sens. Environ., 199, 25–38, https://doi.org/10.1016/j.rse.2017.06.019, 2017b.

Som-ard, J., Immitzer, M., Vuolo, F., Ninsawat, S., and Atzberger, C.: Mapping of crop types in 1989, 1999, 2009 and 2019 to assess major land cover trends of the Udon Thani Province, Thailand, Comput. Electron. Agr., 198, 107083, https://doi.org/10.1016/j.compag.2022.107083, 2022.

Song, X. P., Hansen, M. C., Stehman, S. V., Potapov, P. V., Tyukavina, A., Vermote, E. F., and Townshend, J. R.: Global land change from 1982 to 2016, Nature, 560, 639–643, https://doi.org/10.1038/s41586-018-0411-9, 2018.

Song, X.-P., Potapov, P. V., Krylov, A., King, L., Di Bella, C. M., Hudson, A., Khan, A., Adusei, B., Stehman, S. V., and Hansen, M. C.: National-scale soybean mapping and area estimation in the United States using medium resolution satellite imagery and field survey, Remote Sens. Environ., 190, 383–395, https://doi.org/10.1016/j.rse.2017.01.008, 2017.

Song, X.-P., Huang, W., Hansen, M. C., and Potapov, P.: An evaluation of Landsat, Sentinel-2, Sentinel-1 and MODIS data for crop type mapping, Sci. Remote Sens., 3, 100018, https://doi.org/10.1016/j.srs.2021.100018, 2021a.

Song, X.-P., Hansen, M. C., Potapov, P., Adusei, B., Pickering, J., Adami, M., Lima, A., Zalles, V., Stehman, S. V., Di Bella, C. M., Conde, M. C., Copati, E. J., Fernandes, L. B., Hernandez-Serna, A., Jantz, S. M., Pickens, A. H., Turubanova, S., and Tyukavina, A.: Massive soybean expansion in South America since 2000 and implications for conservation, Nature Sustainability, 4, 784–792, https://doi.org/10.1038/s41893-021-00729-z, 2021b.

Song, X.-P., Li, H., Potapov, P., and Hansen, M. C.: Annual 30 m soybean yield mapping in Brazil using long-term satellite observations, climate data and machine learning, Agr. Forest Meteorol., 326, https://doi.org/10.1016/j.agrformet.2022.109186, 2022.

Stehman, S. V.: Estimating area and map accuracy for stratified random sampling when the strata are different from the map classes, Int. J. Remote Sens., 35, 4923–4939, https://doi.org/10.1080/01431161.2014.930207, 2014.

Tanaka, T., Sun, L., Becker-Reshef, I., Song, X.-P., and Puricelli, E.: Satellite forecasting of crop harvest can trigger a cross-hemispheric production response and improve global food security, Commun. Earth Environ., 4, 334, https://doi.org/10.1038/s43247-023-00992-2, 2023.

Tucker, C. J.: Red and photographic infrared linear combinations for monitoring vegetation, Remote Sens. Environ., 8, 127–150, https://doi.org/10.1016/0034-4257(79)90013-0, 1979.

US Census Bureau: TIGER/Line Shapefiles, https://www.census.gov/geographies/mapping-files/time-series/geo/tiger-line-file.html (last access: 26 December 2025), 2025.

USDA NASS: Quick stats, https://www.nass.usda.gov/Quick_Stats/ (last access: 26 December 2025), 2025.

Van Tricht, K., Degerickx, J., Gilliams, S., Zanaga, D., Battude, M., Grosu, A., Brombacher, J., Lesiv, M., Bayas, J. C. L., Karanam, S., Fritz, S., Becker-Reshef, I., Franch, B., Mollà-Bononad, B., Boogaard, H., Pratihast, A. K., Koetz, B., and Szantoi, Z.: WorldCereal: a dynamic open-source system for global-scale, seasonal, and reproducible crop and irrigation mapping, Earth Syst. Sci. Data, 15, 5491–5515, https://doi.org/10.5194/essd-15-5491-2023, 2023.

Wang, S., Azzari, G., and Lobell, D. B.: Crop type mapping without field-level labels: Random forest transfer and unsupervised clustering techniques, Remote Sens. Environ., 222, 303–317, https://doi.org/10.1016/j.rse.2018.12.026, 2019.

Wang, S., Di Tommaso, S., Deines, J. M., and Lobell, D. B.: Mapping twenty years of corn and soybean across the US Midwest using the Landsat archive, Scientific Data, 7, 307, https://doi.org/10.1038/s41597-020-00646-4, 2020.

Wang, Y., Feng, K., Sun, L., Xie, Y., and Song, X.-P.: Satellite-based soybean yield prediction in Argentina: A comparison between panel regression and deep learning methods, Comput. Electron. Agr., 221, 108978, https://doi.org/10.1016/j.compag.2024.108978, 2024.

Wardlow, B. D. and Egbert, S. L.: Large-area crop mapping using time-series MODIS 250 m NDVI data: An assessment for the U.S. Central Great Plains, Remote Sens. Environ., 112, 1096–1116, https://doi.org/10.1016/j.rse.2007.07.019, 2008.

Wardlow, B. D., Egbert, S. L., and Kastens, J. H.: Analysis of time-series MODIS 250 m vegetation index data for crop classification in the U.S. Central Great Plains, Remote Sens. Environ., 108, 290–310, https://doi.org/10.1016/j.rse.2006.11.021, 2007.

Woodcock, C. E., Allen, R., Anderson, M., Belward, A., Bindschadler, R., Cohen, W., Gao, F., Goward, S. N., Helder, D., Helmer, E., Nemani, R., Oreopoulos, L., Schott, J., Thenkabail, P. S., Vermote, E. F., Vogelmann, J., Wulder, M. A., and Wynne, R.: Free access to Landsat imagery, Science, 320, 1011, https://doi.org/10.1126/science.320.5879.1011a, 2008.

Wright, C. K. and Wimberly, M. C.: Recent land use change in the Western Corn Belt threatens grasslands and wetlands, P. Natl. Acad. Sci., 110, 4134–4139, https://doi.org/10.1073/pnas.1215404110, 2013.

Xiong, J., Thenkabail, P. S., Gumma, M. K., Teluguntla, P., Poehnelt, J., Congalton, R. G., Yadav, K., and Thau, D.: Automated cropland mapping of continental Africa using Google Earth Engine cloud computing, ISPRS J. Photogramm., 126, 225–244, https://doi.org/10.1016/j.isprsjprs.2017.01.019, 2017.

Yan, L. and Roy, D. P.: Automated crop field extraction from multi-temporal Web Enabled Landsat Data, Remote Sens. Environ., 144, 42–64, https://doi.org/10.1016/j.rse.2014.01.006, 2014.

Yan, L. and Roy, D. P.: Conterminous United States crop field size quantification from multi-temporal Landsat data, Remote Sens. Environ., 172, 67–86, https://doi.org/10.1016/j.rse.2015.10.034, 2016.

Yang, Y., Wilson, L. T., and Wang, J.: A spatially explicit crop planting initiation and progression model for the conterminous United States, Eur. J. Agron., 90, 184–197, https://doi.org/10.1016/j.eja.2017.08.004, 2017.

Yang, Z., Diao, C., and Gao, F.: Towards scalable within-season crop mapping with phenology normalization and deep learning, IEEE J. Sel. Top. Appl., 16, 1390–1402, https://doi.org/10.1109/JSTARS.2023.3237500, 2023.

You, N., Dong, J., Huang, J., Du, G., Zhang, G., He, Y., Yang, T., Di, Y., and Xiao, X.: The 10-m crop type maps in Northeast China during 2017–2019, Scientific Data, 8, 41, https://doi.org/10.1038/s41597-021-00827-9, 2021.

You, N., Dong, J., Li, J., Huang, J., and Jin, Z.: Rapid early-season maize mapping without crop labels, Remote Sens. Environ., 290, https://doi.org/10.1016/j.rse.2023.113496, 2023.

Zalles, V., Hansen, M. C., Potapov, P. V., Stehman, S. V., Tyukavina, A., Pickens, A., Song, X. P., Adusei, B., Okpa, C., Aguilar, R., John, N., and Chavez, S.: Near doubling of Brazil's intensive row crop area since 2000, P. Natl. Acad. Sci., 116, 428–435, https://doi.org/10.1073/pnas.1810301115, 2019.

Zalles, V., Hansen, M. C., Potapov, P. V., Parker, D., Stehman, S. V., Pickens, A. H., Parente, L. L., Ferreira, L. G., Song, X.-P., Hernandez-Serna, A., and Kommareddy, I.: Rapid expansion of human impact on natural land in South America since 1985, Sci. Adv., 7, eabg1620, https://doi.org/10.1126/sciadv.abg1620, 2021.

Zhong, L., Gong, P., and Biging, G. S.: Efficient corn and soybean mapping with temporal extendability: A multi-year experiment using Landsat imagery, Remote Sens. Environ., 140, 1–13, https://doi.org/10.1016/j.rse.2013.08.023, 2014.

Zhu, Z., Wang, S., and Woodcock, C. E.: Improvement and expansion of the Fmask algorithm: Cloud, cloud shadow, and snow detection for Landsats 4–7, 8, and Sentinel 2 images, Remote Sens. Environ., 159, 269–277, https://doi.org/10.1016/j.rse.2014.12.014, 2015.